12.3: The Regression Equation

jkesler

Data rarely fit a straight line exactly. Usually, you must be satisfied with rough predictions. Typically, you have a set of data whose scatter plot appears to “fit” a straight line. This line is called a line of best fit or least-squares line.

Example 12.6

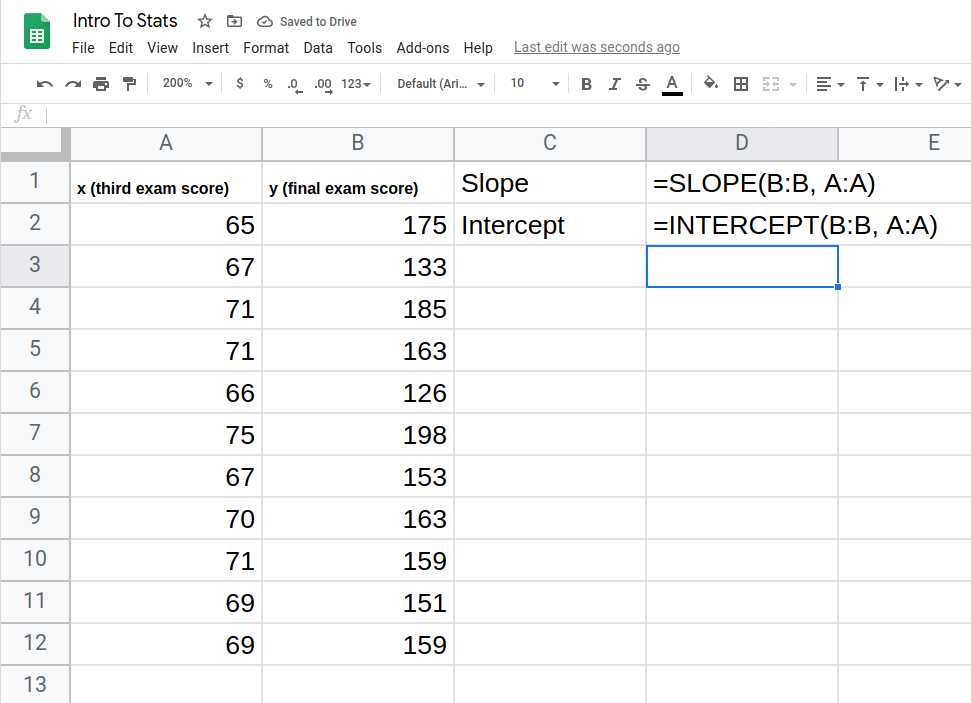

A random sample of 11 statistics students produced the following data, where x is the third exam score out of 80, and y is the final exam score out of 200. Can you predict the final exam score of a random student if you know the third exam score?

| x (third exam score) | y (final exam score) |
|---|---|
| 65 | 175 |
| 67 | 133 |
| 71 | 185 |
| 71 | 163 |
| 66 | 126 |
| 75 | 198 |
| 67 | 153 |
| 70 | 163 |
| 71 | 159 |
| 69 | 151 |
| 69 | 159 |

Try It 12.6

SCUBA divers have maximum dive times they cannot exceed when going to different depths. The data in Table 12.4 show different depths with the maximum dive times in minutes. Use your calculator to find the least squares regression line and predict the maximum dive time for 110 feet.

| X (depth in feet) | Y (maximum dive time in minutes) |
|---|---|
| 50 | 80 |
| 60 | 55 |
| 70 | 45 |
| 80 | 35 |
| 90 | 25 |
| 100 | 22 |

The third exam score, x, is the independent variable and the final exam score, y, is the dependent variable. We will plot a regression line that best “fits” the data. If each of you were to fit a line “by eye,” you would draw different lines. We can use what is called a least-squares regression line to obtain the best fit line.

Consider the following diagram. Each data point has the form (x, y), and each point on the line of best fit obtained by least-squares linear regression has the form (x, ŷ).

The ŷ is read “y hat” and is the estimated value of y. It is the value of y obtained using the regression line. It is not generally equal to y from data.

The term $y_0 - \hat{y}_0 = \varepsilon_0$ is called the “error” or residual. It is not an error in the sense of a mistake. The absolute value of a residual measures the vertical distance between the actual value of y and the estimated value of y. In other words, it measures the vertical distance between the actual data point and the predicted point on the line.

If the observed data point lies above the line, the residual is positive, and the line underestimates the actual data value for y. If the observed data point lies below the line, the residual is negative, and the line overestimates that actual data value for y.

In the diagram in Figure 12.10, $y_0 - \hat{y}_0 = \varepsilon_0$ is the residual for the point shown. Here the point lies above the line and the residual is positive.

$\varepsilon$ = the Greek letter epsilon

For each data point, you can calculate the residuals or errors, $y_i - \hat{y}_i = \varepsilon_i$ for i = 1, 2, 3, …, 11.

Each $|\varepsilon_i|$ is a vertical distance.

For the example about the third exam scores and the final exam scores for the 11 statistics students, there are 11 data points. Therefore, there are 11 $\varepsilon$ values. If you square each $\varepsilon$ and add, you get

$$\varepsilon_1^2 + \varepsilon_2^2 + \cdots + \varepsilon_{11}^2 = \sum_{i=1}^{11} \varepsilon_i^2$$

This is called the Sum of Squared Errors (SSE).

Using calculus, you can determine the values of the intercept a and the slope b that make the SSE a minimum. When you make the SSE a minimum, you have determined the points that are on the line of best fit. It turns out that the line of best fit has the equation:

$$\hat y = a +bx$$

where $a=\bar y -b \bar x$ and $b = \frac{\sum(x-\bar x)(y-\bar y)}{\sum(x-\bar x)^2}$

The sample means of the x values and the y values are $\bar x$ and $\bar y$, respectively. The best fit line always passes through the point $(\bar x, \bar y)$.

The slope b can be written as $b = r\left( \frac{s_y}{s_x} \right)$, where $s_y$ is the standard deviation of the y values, $s_x$ is the standard deviation of the x values, and r is the correlation coefficient, which is discussed later in this section.
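As a minimal sketch, the formulas for a and b can be applied directly to the 11 data points from Example 12.6. Python is used here only for illustration, and the variable names (`x_bar`, `s_xy`, `s_xx`) are assumptions, not notation from the text.

```python
# Third exam (x) and final exam (y) scores from Example 12.6.
x = [65, 67, 71, 71, 66, 75, 67, 70, 71, 69, 69]
y = [175, 133, 185, 163, 126, 198, 153, 163, 159, 151, 159]

n = len(x)
x_bar = sum(x) / n  # sample mean of the x values
y_bar = sum(y) / n  # sample mean of the y values

# Slope: b = sum((x - x_bar)(y - y_bar)) / sum((x - x_bar)^2)
s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
s_xx = sum((xi - x_bar) ** 2 for xi in x)
b = s_xy / s_xx

# Intercept: a = y_bar - b * x_bar, so the line passes through (x_bar, y_bar).
a = y_bar - b * x_bar

print(f"y-hat = {a:.2f} + {b:.2f}x")  # prints: y-hat = -173.51 + 4.83x
```

The rounded values agree with the equation reported for this example, ŷ = –173.51 + 4.83x.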

Least Squares Criteria for Best Fit

The process of fitting the best-fit line is called linear regression. The idea behind finding the best-fit line is based on the assumption that the data are scattered about a straight line. The criterion for the best-fit line is that the sum of the squared errors (SSE) is minimized, that is, made as small as possible. Any other line you might choose would have a higher SSE than the best-fit line. This best-fit line is called the least-squares regression line.
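The least-squares criterion can be checked numerically. The sketch below, which assumes the exam data from Example 12.6, compares the SSE of the least-squares line with the SSE of a few other lines. The helper `sse` and the alternative lines are arbitrary choices made for illustration.

```python
# Third exam (x) and final exam (y) scores from Example 12.6.
x = [65, 67, 71, 71, 66, 75, 67, 70, 71, 69, 69]
y = [175, 133, 185, 163, 126, 198, 153, 163, 159, 151, 159]


def sse(a, b):
    """Sum of squared errors for the line y-hat = a + b*x."""
    return sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))


# Least-squares intercept a and slope b from the formulas in this section.
n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n
b = (sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
     / sum((xi - x_bar) ** 2 for xi in x))
a = y_bar - b * x_bar

best = sse(a, b)
print(f"least-squares line: SSE = {best:.1f}")

# Hand-picked alternative lines (hypothetical, for comparison only).
alternatives = [(a + 5, b), (a - 5, b), (a, b + 0.1), (a, b - 0.1), (-100.0, 3.8)]
for alt_a, alt_b in alternatives:
    print(f"a = {alt_a:8.2f}, b = {alt_b:5.2f}: SSE = {sse(alt_a, alt_b):.1f}")
```

Every alternative line produces a larger SSE than the least-squares line, which is what the criterion requires.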

Computer spreadsheets, statistical software, and many calculators can quickly calculate the best-fit line and create the graphs. The calculations tend to be tedious if done by hand. Instructions to use Google Sheets to find the best-fit line and create a scatterplot are shown at the end of this section.

THIRD EXAM vs FINAL EXAM EXAMPLE:

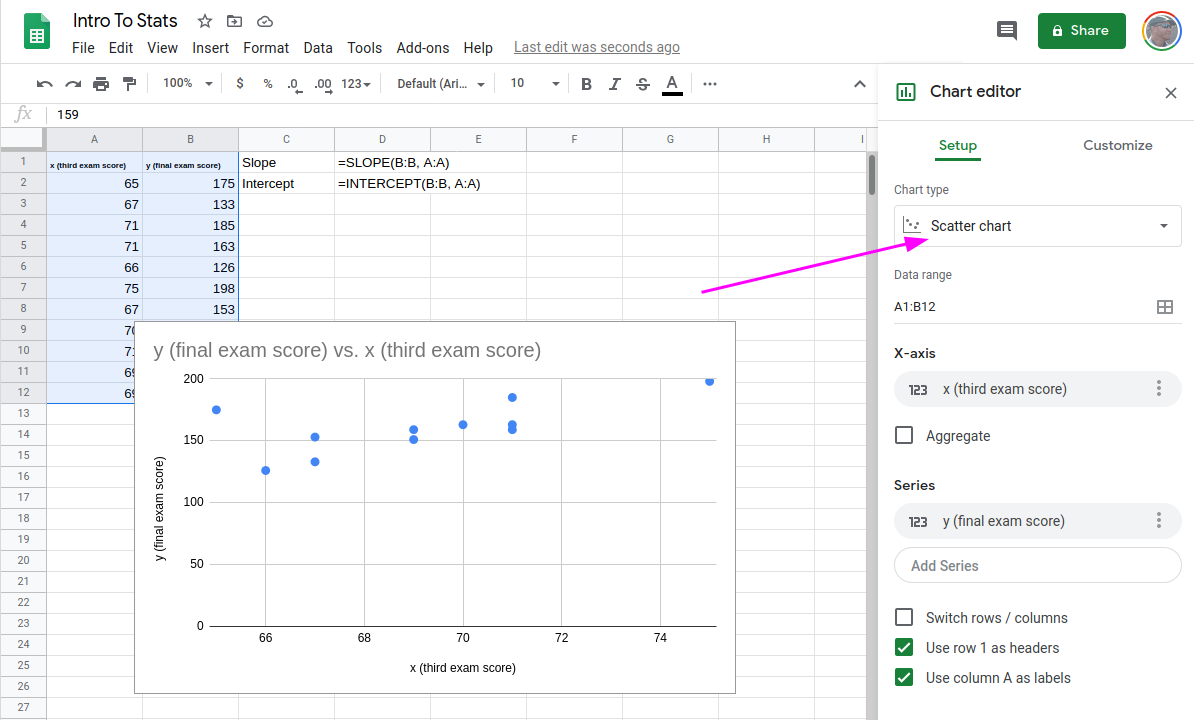

The graph of the line of best fit for the third-exam/final-exam example is as follows:

The least squares regression line (best-fit line) for the third-exam/final-exam example has the equation:

$$\hat y = -173.51+4.83x$$
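With this equation, the residual for each student can be computed as the observed y minus the predicted ŷ. The following sketch assumes the rounded coefficients above, and `y_hat` is a hypothetical helper name.

```python
# Third exam (x) and final exam (y) scores from Example 12.6.
x = [65, 67, 71, 71, 66, 75, 67, 70, 71, 69, 69]
y = [175, 133, 185, 163, 126, 198, 153, 163, 159, 151, 159]


def y_hat(xi):
    """Predicted final exam score from the rounded best-fit equation."""
    return -173.51 + 4.83 * xi


# Residual = observed y minus predicted y-hat.
residuals = [yi - y_hat(xi) for xi, yi in zip(x, y)]

for xi, yi, e in zip(x, y, residuals):
    # A positive residual means the point lies above the line
    # (none of the residuals in this data set is exactly zero).
    position = "above" if e > 0 else "below"
    print(f"x = {xi}, y = {yi}, y-hat = {y_hat(xi):.2f}, "
          f"residual = {e:+.2f} ({position} the line)")
```

For the first student (x = 65, y = 175), the line predicts 140.44, so the residual is +34.56 and the line underestimates that student's final exam score.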

Reminder

Remember, it is always important to plot a scatter diagram first. If the scatter plot indicates that there is a linear relationship between the variables, then it is reasonable to use a best fit line to make predictions for y given x within the domain of x-values in the sample data, but not necessarily for x-values outside that domain. You could use the line to predict the final exam score for a student who earned a grade of 73 on the third exam. You should NOT use the line to predict the final exam score for a student who earned a grade of 50 on the third exam, because 50 is not within the domain of the x-values in the sample data, which are between 65 and 75.
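One way to respect this restriction in code is to refuse predictions outside the sampled range. This is only a sketch: the function name `predict_final` and the choice to raise an error are assumptions, not something prescribed by the text.

```python
X_MIN, X_MAX = 65, 75  # smallest and largest third exam scores in the sample


def predict_final(third_exam):
    """Predict the final exam score, refusing to extrapolate beyond the sample."""
    if not X_MIN <= third_exam <= X_MAX:
        raise ValueError(
            f"{third_exam} is outside the sampled range {X_MIN} to {X_MAX}"
        )
    return -173.51 + 4.83 * third_exam


print(f"{predict_final(73):.2f}")  # prints: 179.08

try:
    predict_final(50)
except ValueError as err:
    print("No prediction:", err)
```

A third exam score of 73 gives a predicted final exam score of about 179, while a score of 50 is rejected because it lies outside the x-values in the sample.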

UNDERSTANDING SLOPE

The slope of the line, b, describes how changes in the variables are related. It is important to interpret the slope of the line in the context of the situation represented by the data. You should be able to write a sentence interpreting the slope in plain English.

INTERPRETATION OF THE SLOPE: The slope of the best-fit line tells us how the dependent variable (y) changes for every one unit increase in the independent (x) variable, on average.

THIRD EXAM vs FINAL EXAM EXAMPLE

Slope: The slope of the line is b = 4.83.

Interpretation: For a one-point increase in the score on the third exam, the final exam score increases by 4.83 points, on average.

Using Google Sheets

Copy your data into, for example, columns A and B. In an empty cell, you can use the SLOPE function to calculate the slope.

=SLOPE(B:B, A:A)

We can also use the INTERCEPT function to find the intercept of our line.

=INTERCEPT(B:B, A:A)

The intercept is –173.513, and the slope is 4.8273, so the equation of the best-fit line is ŷ = –173.51 + 4.83x.

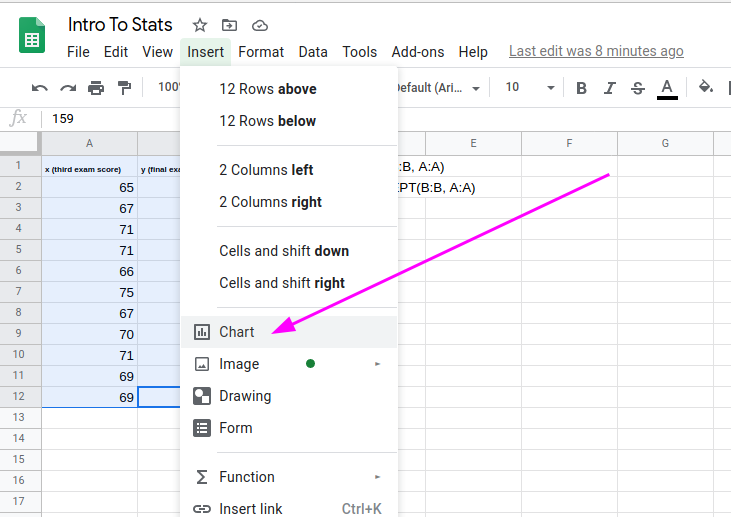

Graphing the Scatterplot and Regression Line

- Select columns A & B

- From the Insert menu, select Chart

- In the sidebar pop-up, make sure Scatter chart is selected as the Chart Type.

- Also in the sidebar, select Customize, then select Trendline under the Series menu.

Besides looking at the scatter plot and seeing that a line seems reasonable, how can you tell if the line is a good predictor? Use the correlation coefficient as another indicator (besides the scatterplot) of the strength of the relationship between x and y.

The correlation coefficient, r, developed by Karl Pearson in the early 1900s, is numerical and provides a measure of strength and direction of the linear association between the independent variable x and the dependent variable y.

The correlation coefficient is calculated as

$$r=\frac{n \sum(xy)-\left( \sum x \right)\left( \sum y \right)}{\sqrt{\left[ n\sum x^2-\left( \sum x \right)^2 \right] \left[ n \sum y^2 - \left( \sum y \right)^2 \right]}}$$

where n = the number of data points.
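The formula can be evaluated term by term. The sketch below assumes the exam data from Example 12.6, and the variable names for the individual sums are chosen here for illustration.

```python
import math

# Third exam (x) and final exam (y) scores from Example 12.6.
x = [65, 67, 71, 71, 66, 75, 67, 70, 71, 69, 69]
y = [175, 133, 185, 163, 126, 198, 153, 163, 159, 151, 159]

n = len(x)
sum_x = sum(x)
sum_y = sum(y)
sum_xy = sum(xi * yi for xi, yi in zip(x, y))
sum_x2 = sum(xi ** 2 for xi in x)
sum_y2 = sum(yi ** 2 for yi in y)

# Numerator and denominator of the formula for r.
numerator = n * sum_xy - sum_x * sum_y
denominator = math.sqrt(
    (n * sum_x2 - sum_x ** 2) * (n * sum_y2 - sum_y ** 2)
)
r = numerator / denominator

print(f"r = {r:.4f}")  # prints: r = 0.6631
```

The result matches the correlation coefficient reported for the third-exam/final-exam example.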

Using Google Sheets

=CORREL(B:B, A:A)

If you suspect a linear relationship between x and y, then r can measure how strong the linear relationship is.

What the VALUE of r tells us:

- The value of r is always between –1 and +1: –1 ≤ r ≤ 1.

- The size of the correlation r indicates the strength of the linear relationship between x and y. Values of r close to –1 or to +1 indicate a stronger linear relationship between x and y.

- If r = 0 there is likely no linear correlation. It is important to view the scatterplot, however, because data that exhibit a curved or horizontal pattern may have a correlation of 0.

- If r = 1, there is perfect positive correlation. If r = –1, there is perfect negative correlation. In both these cases, all of the original data points lie on a straight line. Of course, in the real world, this will not generally happen.

What the SIGN of r tells us

- A positive value of r means that when x increases, y tends to increase and when x decreases, y tends to decrease (positive correlation).

- A negative value of r means that when x increases, y tends to decrease and when x decreases, y tends to increase (negative correlation).

- The sign of r is the same as the sign of the slope, b, of the best-fit line.

Note

The Coefficient of Determination

The quantity $r^2$ is called the coefficient of determination. It is the square of the correlation coefficient, but it is usually stated as a percent rather than in decimal form. It has an interpretation in the context of the data:

- $r^2$, when expressed as a percent, represents the percent of variation in the dependent (predicted) variable y that can be explained by variation in the independent (explanatory) variable x using the regression (best-fit) line.

- $1-r^2$, when expressed as a percentage, represents the percent of variation in y that is NOT explained by variation in x using the regression line. This can be seen as the scattering of the observed data points about the regression line.

Consider the third exam/final exam example introduced earlier in this section.

- The line of best fit is: ŷ = –173.51 + 4.83x

- The correlation coefficient is r = 0.6631

- The coefficient of determination is $r^2 = 0.6631^2 = 0.4397$

- Interpretation of $r^2$ in the context of this example:

  - Approximately 44% of the variation (0.4397 is approximately 0.44) in the final-exam grades can be explained by the variation in the grades on the third exam, using the best-fit regression line.

  - Therefore, approximately 56% of the variation (1 – 0.44 = 0.56) in the final exam grades can NOT be explained by the variation in the grades on the third exam, using the best-fit regression line. (This is seen as the scattering of the points about the line.)
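The "explained variation" reading can be illustrated numerically. For a least-squares line with an intercept, $r^2$ equals 1 minus the ratio of the SSE to the total squared variation of y about its mean. The sketch below assumes the exam data from Example 12.6. The name `sst` for the total variation is an assumption introduced here and is not used in the text.

```python
# Third exam (x) and final exam (y) scores from Example 12.6.
x = [65, 67, 71, 71, 66, 75, 67, 70, 71, 69, 69]
y = [175, 133, 185, 163, 126, 198, 153, 163, 159, 151, 159]

n = len(x)
x_bar, y_bar = sum(x) / n, sum(y) / n

# Least-squares slope b and intercept a.
b = (sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
     / sum((xi - x_bar) ** 2 for xi in x))
a = y_bar - b * x_bar

# Variation left unexplained by the line (scatter about the line).
sse = sum((yi - (a + b * xi)) ** 2 for xi, yi in zip(x, y))
# Total variation of y about its mean.
sst = sum((yi - y_bar) ** 2 for yi in y)

r_squared = 1 - sse / sst
print(f"r^2 = {r_squared:.4f}")            # prints: r^2 = 0.4397
print(f"unexplained = {sse / sst:.4f}")    # prints: unexplained = 0.5603
```

About 44% of the variation in final exam scores is accounted for by the line, and the remaining 56% or so shows up as scatter about it.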