16.9: The Bernoulli-Laplace Chain

- Page ID

- 10296

\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\( \newcommand{\id}{\mathrm{id}}\) \( \newcommand{\Span}{\mathrm{span}}\)

( \newcommand{\kernel}{\mathrm{null}\,}\) \( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\) \( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\) \( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\id}{\mathrm{id}}\)

\( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\kernel}{\mathrm{null}\,}\)

\( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\)

\( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\)

\( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\Span}{\mathrm{span}}\) \( \newcommand{\AA}{\unicode[.8,0]{x212B}}\)

\( \newcommand{\vectorA}[1]{\vec{#1}} % arrow\)

\( \newcommand{\vectorAt}[1]{\vec{\text{#1}}} % arrow\)

\( \newcommand{\vectorB}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vectorC}[1]{\textbf{#1}} \)

\( \newcommand{\vectorD}[1]{\overrightarrow{#1}} \)

\( \newcommand{\vectorDt}[1]{\overrightarrow{\text{#1}}} \)

\( \newcommand{\vectE}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash{\mathbf {#1}}}} \)

\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\(\newcommand{\avec}{\mathbf a}\) \(\newcommand{\bvec}{\mathbf b}\) \(\newcommand{\cvec}{\mathbf c}\) \(\newcommand{\dvec}{\mathbf d}\) \(\newcommand{\dtil}{\widetilde{\mathbf d}}\) \(\newcommand{\evec}{\mathbf e}\) \(\newcommand{\fvec}{\mathbf f}\) \(\newcommand{\nvec}{\mathbf n}\) \(\newcommand{\pvec}{\mathbf p}\) \(\newcommand{\qvec}{\mathbf q}\) \(\newcommand{\svec}{\mathbf s}\) \(\newcommand{\tvec}{\mathbf t}\) \(\newcommand{\uvec}{\mathbf u}\) \(\newcommand{\vvec}{\mathbf v}\) \(\newcommand{\wvec}{\mathbf w}\) \(\newcommand{\xvec}{\mathbf x}\) \(\newcommand{\yvec}{\mathbf y}\) \(\newcommand{\zvec}{\mathbf z}\) \(\newcommand{\rvec}{\mathbf r}\) \(\newcommand{\mvec}{\mathbf m}\) \(\newcommand{\zerovec}{\mathbf 0}\) \(\newcommand{\onevec}{\mathbf 1}\) \(\newcommand{\real}{\mathbb R}\) \(\newcommand{\twovec}[2]{\left[\begin{array}{r}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\ctwovec}[2]{\left[\begin{array}{c}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\threevec}[3]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\cthreevec}[3]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\fourvec}[4]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\cfourvec}[4]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\fivevec}[5]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\cfivevec}[5]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\mattwo}[4]{\left[\begin{array}{rr}#1 \amp #2 \\ #3 \amp #4 \\ \end{array}\right]}\) \(\newcommand{\laspan}[1]{\text{Span}\{#1\}}\) \(\newcommand{\bcal}{\cal B}\) \(\newcommand{\ccal}{\cal C}\) \(\newcommand{\scal}{\cal S}\) \(\newcommand{\wcal}{\cal W}\) \(\newcommand{\ecal}{\cal E}\) \(\newcommand{\coords}[2]{\left\{#1\right\}_{#2}}\) \(\newcommand{\gray}[1]{\color{gray}{#1}}\) \(\newcommand{\lgray}[1]{\color{lightgray}{#1}}\) \(\newcommand{\rank}{\operatorname{rank}}\) \(\newcommand{\row}{\text{Row}}\) \(\newcommand{\col}{\text{Col}}\) \(\renewcommand{\row}{\text{Row}}\) \(\newcommand{\nul}{\text{Nul}}\) \(\newcommand{\var}{\text{Var}}\) \(\newcommand{\corr}{\text{corr}}\) \(\newcommand{\len}[1]{\left|#1\right|}\) \(\newcommand{\bbar}{\overline{\bvec}}\) \(\newcommand{\bhat}{\widehat{\bvec}}\) \(\newcommand{\bperp}{\bvec^\perp}\) \(\newcommand{\xhat}{\widehat{\xvec}}\) \(\newcommand{\vhat}{\widehat{\vvec}}\) \(\newcommand{\uhat}{\widehat{\uvec}}\) \(\newcommand{\what}{\widehat{\wvec}}\) \(\newcommand{\Sighat}{\widehat{\Sigma}}\) \(\newcommand{\lt}{<}\) \(\newcommand{\gt}{>}\) \(\newcommand{\amp}{&}\) \(\definecolor{fillinmathshade}{gray}{0.9}\)Basic Theory

Introduction

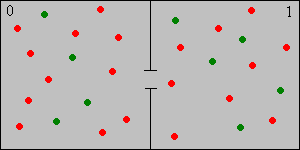

The Bernoulli-Laplace chain, named for Jacob Bernoulli and Pierre Simon Laplace, is a simple discrete model for the diffusion of two incompressible gases between two containers. Like the Ehrenfest chain, it can also be formulated as a simple ball and urn model. Thus, suppose that we have two urns, labeled 0 and 1. Urn 0 contains \( j \) balls and urn 1 contains \( k \) balls, where \( j, \, k \in \N_+ \). Of the \( j + k \) balls, \( r \) are red and the remaining \( j + k - r \) are green. Thus \( r \in \N_+ \) and \( 0 \lt r \lt j + k \). At each discrete time, independently of the past, a ball is selected at random from each urn and then the two balls are switched. The balls of different colors correspond to molecules of different types, and the urns are the containers. The incompressible property is reflected in the fact that the number of balls in each urn remains constant over time.

Let \( X_n \) denote the number of red balls in urn 1 at time \( n \in \N \). Then

- \( k - X_n \) is the number of green balls in urn 1 at time \( n \).

- \( r - X_n \) is the number of red balls in urn 0 at time \( n \).

- \( j - r + X_n \) is the number of green balls in urn 0 at time \( n \).

\( \bs{X} = (X_0, X_1, X_2, \ldots) \) is a discrete-time Markov chain with state space \( S = \{\max\{0, r - j\}, \ldots, \min\{k,r\}\} \) and with transition matrix \( P \) given by \[ P(x, x - 1) = \frac{(j - r + x) x}{j k}, \; P(x, x) = \frac{(r - x) x + (j - r + x)(k - x)}{j k}, \; P(x, x + 1) = \frac{(r - x)(k - x)}{j k}; \quad x \in S \]

Proof

For the state space, note from the previous result that the number of red balls \( x \) in urn 1 must satisfy the inequalities \( x \ge 0 \), \( x \le k \), \( x \le r \), and \( x \ge r - j \). The Markov property is clear from the model. For the transition probabilities, note that to go from state \( x \) to state \(x - 1 \) we must select a green ball from urn 0 and a red ball from urn 1. The probabilities of these events are \( (j - r + x) / j \) and \( x / k \) for \( x \) and \( x - 1 \) in \( S \), and the events are independent. Similarly, to go from state \( x \) to state \( x + 1 \) we must select a red ball from urn 0 and a green ball from urn 1. The probabilities of these events are \( (r - x) / j \) and \( (k - x) / k \) for \( x \) and \( x + 1 \) in \( S \), and the events are independent. Finally, to go from state \( x \) back to state \( x \), we must select a red ball from both urns or a green ball from both urns. Of course also, \( P(x, x) = 1 - P(x, x - 1) - P(x, x + 1) \).

This is a fairly complicated model, simply because of the number of parameters. Interesting special cases occur when some of the parameters are the same.

Consider the special case \( j = k \), so that each urn has the same number of balls. The state space is \( S = \{\max\{0, r - k\}, \ldots, \min\{k,r\}\} \) and the transition probability matrix is \[ P(x, x - 1) = \frac{(k - r + x) x}{k^2}, \; P(x, x) = \frac{(r - x) x + (k - r + x)(k - x)}{k^2}, \; P(x, x + 1) = \frac{(r - x)(k - x)}{k^2}; \quad x \in S \]

Consider the special case \( r = j \), so that the number of red balls is the same as the number of balls in urn 0. The state space is \( S = \{0, \ldots, \min\{j, k\}\} \) and the transition probability matrix is \[ P(x, x - 1) = \frac{x^2}{j k}, \; P(x, x) = \frac{x (j + k - 2 x)}{j k}, \; P(x, x + 1) = \frac{(j - x)(k - x)}{j k}; \quad x \in S \]

Consider the special case \( r = k \), so that the number of red balls is the same as the number of balls in urn 1. The state space is \( S = \{\max\{0, k - j\}, \ldots, k\} \) and the transition probability matrix is \[ P(x, x - 1) = \frac{(j - k + x) x}{j k}, \; P(x, x) = \frac{(k - x)(j - k + 2 x)}{j k}, \; P(x, x + 1) = \frac{(k - x)^2}{j k}; \quad x \in S \]

Consider the special case \( j = k = r \), so that each urn has the same number of balls, and this is also the number of red balls. The state space is \( S = \{0, 1, \ldots, k\} \) and the transition probability matrix is \[ P(x, x - 1) = \frac{x^2}{k^2}, \; P(x, x) = \frac{2 x (k - x)}{k^2}, \; P(x, x + 1) = \frac{(k - x)^2}{k^2}; \quad x \in S \]

Run the simulation of the Bernoulli-Laplace experiment for 10000 steps and for various values of the parameters. Note the limiting behavior of the proportion of time spent in each state.

Invariant and Limiting Distributions

The Bernoulli-Laplace chain is irreducible.

Proof

Note that \( P(x, x - 1) \gt 0 \) whenever \( x, \, x - 1 \in S \), and \( P(x, x + 1) \gt 0 \) whenever \( x, \, x + 1 \in S \). Hence every state leads to every other state so the chain is irreducible.

Except in the trivial case \( j = k = r = 1 \), the Bernoulli-Laplace chain aperiodic.

Proof

Consideration of the state probabilities shows that except when \( j = k = r = 1 \), the chain has a state \( x \) with \( P(x, x) \gt 0 \), so state \( x \) is aperiodic. Since the chain is irreducible by the previous result, all states are aperiodic.

The invariant distribution is the hypergeometric distribution with population parameter \( j + k \), sample parameter \( k \), and type parameter \( r \). The probability density function is \[ f(x) = \frac{\binom{r}{x} \binom{j + k - r}{k - x}}{\binom{j + k}{k}}, \quad x \in S \]

Proof

A direct proof that \( (f P)(x) = f(x) \) for all \( x \in S \) is straightforward but tedious. A better proof follows from the reversibility condition below.

Thus, the invariant distribution corresponds to selecting a sample of \( k \) balls at random and without replacement from the \( j + k \) balls and placing them in urn 1. The mean and variance of the invariant distribution are \[ \mu = k \frac{r}{j + k}, \; \sigma^2 = k \frac{r}{j + k} \frac{j + k - r}{j + k} \frac{j}{j + k -1} \]

The mean return time to each state \( x \in S \) is \[ \mu(x) = \frac{\binom{j + k}{k}}{\binom{r}{x} \binom{j + k - r}{k - x}} \]

Proof

This follows from the general theory and the invariant distribution above.

\( P^n(x, y) \to f(y) = \binom{r}{y} \binom{r + k - r}{k - y} \big/ \binom{j + k}{k} \) as \( n \to \infty \) for \( (x, y) \in S^2 \).

Proof

This follows from the general theory and the invariant distribution above.

In the simulation of the Bernoulli-Laplace experiment, vary the parameters and note the shape and location of the limiting hypergeometric distribution. For selected values of the parameters, run the simulation for 10000 steps and and note the limiting behavior of the proportion of time spent in each state.

Reversibility

The Bernoulli-Laplace chain is reversible.

Proof

Let \[ g(x) = \binom{r}{x} \binom{j + k - r}{k - x}, \quad x \in S \] It suffices to show the reversibility condition \( g(x) P(x, y) = g(y) P(y, x) \) for all \( x, \, y \in S \). It then follows that \( \bs{X} \) is reversible and that \( g \) is invariant for \( \bs{X} \). For \( x \in S \) and \( y = x - 1 \in S \), the left and right sides of the reversibility condition reduce to \[ \frac{1}{j k}\frac{r!}{(x - 1)! (r - x)!} \frac{(j + k - r)!}{(k - x)! (j - r + x - 1)!} \] For \( x \in S \) and \( y = x + 1 \in S \), the left and right sides of the reversibility condition reduce to \[ \frac{1}{j k} \frac{r!}{x! (r - x - 1)!} \frac{(j + k - r)!}{(k - x - 1)! (j - r + x)!} \] For all other values of \( x, \, y \in S \), the reversibility condition is trivially satisfied. The hypergeometric PDF \( f \) above is simply \( g \) normalized, so this proves that \( f \) is also invariant.

Run the simulation of the Bernoulli-Laplace experiment 10,000 time steps for selected values of the parameters, and with initial state 0. Note that at first, you can see the arrow of time

. After a long period, however, the direction of time is no longer evident.

Computational Exercises

Consider the Bernoulli-Laplace chain with \( j = 10 \), \( k = 5 \), and \( r = 4 \). Suppose that \( X_0 \) has the uniform distribution on \( S \). Explicitly give each of the following:

- The state space \( S \)

- The transition matrix \( P \).

- The probability density function, mean and variance of \( X_1 \).

- The probability density function, mean and variance of \( X_2 \).

- The probability density function, mean and variance of \( X_3 \).

Answer

- \( S = \{0, 1, 2, 3, 4\} \)

- \( P = \frac{1}{50} \left[ \begin{matrix} 30 & 20 & 0 & 0 & 0 \\ 7 & 31 & 12 & 0 & 0 \\ 0 & 16 & 28 & 6 & 0 \\ 0 & 0 & 27 & 21 & 2 \\ 0 & 0 & 0 & 40 & 10 \end{matrix} \right] \)

- \( f_1 = \frac{1}{250} (37, 67, 67, 67, 12), \mu_1 = \frac{9}{5}, \sigma_1^2 = \frac{32}{25} \)

- \( f_2 = \frac{1}{12\,500} (1579, 3889, 4489, 2289, 254), \mu_2 = \frac{83}{50}, \sigma_2^2 = \frac{2413}{2500} \)

- \( f_3 = \frac{1}{625\,000} (74\,593, 223\,963, 234\,163, 85\,163, 7118), \mu_3 = \frac{781}{500}, \sigma_3^2 = \frac{206\,427}{250\,000} \)

Consider the Bernoulli-Laplace chain with \( j = k = 10 \) and \( r = 6 \). Give each of the following explicitly:

- The state space \( S \)

- The transition matrix \( P \)

- The invariant probability density function.

Answer

- \( S = \{0, 1, 2, 3, 4, 5, 6\} \)

- \( P = \frac{1}{100} \left[ \begin{matrix} 40 & 60 & 0 & 0 & 0 & 0 & 0 \\ 5 & 50 & 45 & 0 & 0 & 0 & 0 \\ 0 & 12 & 56 & 32 & 0 & 0 & 0 \\ 0 & 0 & 21 & 58 & 21 & 0 & 0 \\ 0 & 0 & 0 & 32 & 56 & 12 & 0 \\ 0 & 0 & 0 & 0 & 45 & 50 & 5 \\ 0 & 0 & 0 & 0 & 0 & 60 & 40 \end{matrix} \right]\)

- \( f = \frac{1}{1292}(7, 84, 315, 480, 315, 84, 7) \)