11.2: Absorbing Markov Chains**

- Page ID

- 3173

\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\( \newcommand{\dsum}{\displaystyle\sum\limits} \)

\( \newcommand{\dint}{\displaystyle\int\limits} \)

\( \newcommand{\dlim}{\displaystyle\lim\limits} \)

\( \newcommand{\id}{\mathrm{id}}\) \( \newcommand{\Span}{\mathrm{span}}\)

( \newcommand{\kernel}{\mathrm{null}\,}\) \( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\) \( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\) \( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\id}{\mathrm{id}}\)

\( \newcommand{\Span}{\mathrm{span}}\)

\( \newcommand{\kernel}{\mathrm{null}\,}\)

\( \newcommand{\range}{\mathrm{range}\,}\)

\( \newcommand{\RealPart}{\mathrm{Re}}\)

\( \newcommand{\ImaginaryPart}{\mathrm{Im}}\)

\( \newcommand{\Argument}{\mathrm{Arg}}\)

\( \newcommand{\norm}[1]{\| #1 \|}\)

\( \newcommand{\inner}[2]{\langle #1, #2 \rangle}\)

\( \newcommand{\Span}{\mathrm{span}}\) \( \newcommand{\AA}{\unicode[.8,0]{x212B}}\)

\( \newcommand{\vectorA}[1]{\vec{#1}} % arrow\)

\( \newcommand{\vectorAt}[1]{\vec{\text{#1}}} % arrow\)

\( \newcommand{\vectorB}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\( \newcommand{\vectorC}[1]{\textbf{#1}} \)

\( \newcommand{\vectorD}[1]{\overrightarrow{#1}} \)

\( \newcommand{\vectorDt}[1]{\overrightarrow{\text{#1}}} \)

\( \newcommand{\vectE}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash{\mathbf {#1}}}} \)

\( \newcommand{\vecs}[1]{\overset { \scriptstyle \rightharpoonup} {\mathbf{#1}} } \)

\(\newcommand{\longvect}{\overrightarrow}\)

\( \newcommand{\vecd}[1]{\overset{-\!-\!\rightharpoonup}{\vphantom{a}\smash {#1}}} \)

\(\newcommand{\avec}{\mathbf a}\) \(\newcommand{\bvec}{\mathbf b}\) \(\newcommand{\cvec}{\mathbf c}\) \(\newcommand{\dvec}{\mathbf d}\) \(\newcommand{\dtil}{\widetilde{\mathbf d}}\) \(\newcommand{\evec}{\mathbf e}\) \(\newcommand{\fvec}{\mathbf f}\) \(\newcommand{\nvec}{\mathbf n}\) \(\newcommand{\pvec}{\mathbf p}\) \(\newcommand{\qvec}{\mathbf q}\) \(\newcommand{\svec}{\mathbf s}\) \(\newcommand{\tvec}{\mathbf t}\) \(\newcommand{\uvec}{\mathbf u}\) \(\newcommand{\vvec}{\mathbf v}\) \(\newcommand{\wvec}{\mathbf w}\) \(\newcommand{\xvec}{\mathbf x}\) \(\newcommand{\yvec}{\mathbf y}\) \(\newcommand{\zvec}{\mathbf z}\) \(\newcommand{\rvec}{\mathbf r}\) \(\newcommand{\mvec}{\mathbf m}\) \(\newcommand{\zerovec}{\mathbf 0}\) \(\newcommand{\onevec}{\mathbf 1}\) \(\newcommand{\real}{\mathbb R}\) \(\newcommand{\twovec}[2]{\left[\begin{array}{r}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\ctwovec}[2]{\left[\begin{array}{c}#1 \\ #2 \end{array}\right]}\) \(\newcommand{\threevec}[3]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\cthreevec}[3]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \end{array}\right]}\) \(\newcommand{\fourvec}[4]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\cfourvec}[4]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \end{array}\right]}\) \(\newcommand{\fivevec}[5]{\left[\begin{array}{r}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\cfivevec}[5]{\left[\begin{array}{c}#1 \\ #2 \\ #3 \\ #4 \\ #5 \\ \end{array}\right]}\) \(\newcommand{\mattwo}[4]{\left[\begin{array}{rr}#1 \amp #2 \\ #3 \amp #4 \\ \end{array}\right]}\) \(\newcommand{\laspan}[1]{\text{Span}\{#1\}}\) \(\newcommand{\bcal}{\cal B}\) \(\newcommand{\ccal}{\cal C}\) \(\newcommand{\scal}{\cal S}\) \(\newcommand{\wcal}{\cal W}\) \(\newcommand{\ecal}{\cal E}\) \(\newcommand{\coords}[2]{\left\{#1\right\}_{#2}}\) \(\newcommand{\gray}[1]{\color{gray}{#1}}\) \(\newcommand{\lgray}[1]{\color{lightgray}{#1}}\) \(\newcommand{\rank}{\operatorname{rank}}\) \(\newcommand{\row}{\text{Row}}\) \(\newcommand{\col}{\text{Col}}\) \(\renewcommand{\row}{\text{Row}}\) \(\newcommand{\nul}{\text{Nul}}\) \(\newcommand{\var}{\text{Var}}\) \(\newcommand{\corr}{\text{corr}}\) \(\newcommand{\len}[1]{\left|#1\right|}\) \(\newcommand{\bbar}{\overline{\bvec}}\) \(\newcommand{\bhat}{\widehat{\bvec}}\) \(\newcommand{\bperp}{\bvec^\perp}\) \(\newcommand{\xhat}{\widehat{\xvec}}\) \(\newcommand{\vhat}{\widehat{\vvec}}\) \(\newcommand{\uhat}{\widehat{\uvec}}\) \(\newcommand{\what}{\widehat{\wvec}}\) \(\newcommand{\Sighat}{\widehat{\Sigma}}\) \(\newcommand{\lt}{<}\) \(\newcommand{\gt}{>}\) \(\newcommand{\amp}{&}\) \(\definecolor{fillinmathshade}{gray}{0.9}\)The subject of Markov chains is best studied by considering special types of Markov chains. The first type that we shall study is called an absorbing Markov chain.

A state \(s_i\) of a Markov chain is called absorbing if it is impossible to leave it (i.e., \(p_{ii} = 1\)). A Markov chain is if it has at least one absorbing state, and if from every state it is possible to go to an absorbing state (not necessarily in one step).

In an absorbing Markov chain, a state which is not absorbing is called transient

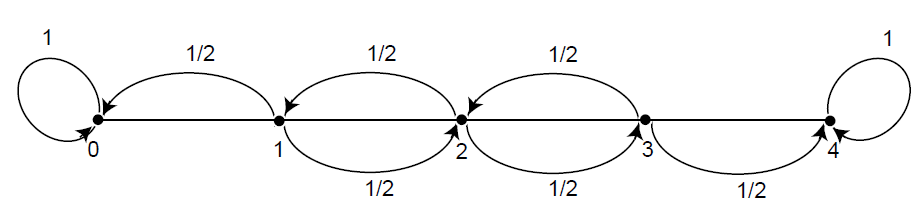

Drunkard’s Walk

A man walks along a four-block stretch of Park Avenue (see Figure 11.1.3). If he is at corner 1, 2, or 3, then he walks to the left or right with equal probability. He continues until he reaches corner 4, which is a bar, or corner 0, which is his home. If he reaches either home or the bar, he stays there.

We form a Markov chain with states 0, 1, 2, 3, and 4. States 0 and 4 are absorbing states. The transition matrix is then

\[P= \begin{matrix} & \begin{matrix}0&&1&&2&&3&&4\end{matrix} \\\begin{matrix}0\\1\\2\\3\\4\end{matrix} & \begin{pmatrix}1 & 0 & 0 & 0 & 0 \\

1 / 2 & 0 & 1 / 2 & 0 & 0 \\

0 & 1 / 2 & 0 & 1 / 2 & 0 \\

0 & 0 & 1 / 2 & 0 & 1 / 2 \\

0 & 0 & 0 & 0 & 1\end{pmatrix}\\\end{matrix}\]

The states 1, 2, and 3 are transient states, and from any of these it is possible to reach the absorbing states 0 and 4. Hence the chain is an absorbing chain. When a process reaches an absorbing state, we shall say that it is absorbed.

The most obvious question that can be asked about such a chain is: What is the probability that the process will eventually reach an absorbing state? Other interesting questions include: (a) What is the probability that the process will end up in a given absorbing state? (b) On the average, how long will it take for the process to be absorbed? (c) On the average, how many times will the process be in each transient state? The answers to all these questions depend, in general, on the state from which the process starts as well as the transition probabilities.

Canonical Form

Consider an arbitrary absorbing Markov chain. Renumber the states so that the transient states come first. If there are \(r\) absorbing states and \(t\) transient states, the transition matrix will have the following canonical form

Figure \(\PageIndex{1}\): Drunkard's walk

\[\mathbf{P}=\begin{gathered}

\\

\text { TR. } \\

\text { ABS. }

\end{gathered}\left(\begin{array}{c|c}

\text { TR. } & \text { ABS. } \\

\mathbf{Q} & \mathbf{R} \\

\hline \mathbf{0} & \mathbf{I}

\end{array}\right)\]

Here \(\mathbf{I}\) is an \(r\)-by-\(r\) indentity matrix, \(\mathbf{0}\) is an \(r\)-by-\(t\) zero matrix, \(\mathbf{R}\) is a nonzero \(t\)-by-\(r\) matrix, and \(\mathbf{Q}\) is an \(t\)-by-\(t\) matrix. The first \(t\) states are transient and the last \(r\) states are absorbing.

In Section 11.1, we saw that the entry \(p_{ij}^{(n)}\) of the matrix \(\mathbf{P}^n\) is the probability of being in the state \(s_j\) after \(n\) steps, when the chain is started in state \(s_i\). A standard matrix algebra argument shows that \(\mathbf{P}^n\) is of the form

\[\mathbf{P}^n=\begin{gathered}

\\

\text { TR. } \\

\text { ABS. }

\end{gathered}\left(\begin{array}{c|c}

\text { TR. } & \text { ABS. } \\

\mathbf{Q}^n & * \\

\hline \mathbf{0} & \mathbf{I}

\end{array}\right)\]

where the asterisk \(*\) stands for the \(t\)-by-\(r\) matrix in the upper right-hand corner of \(\mathbf{P}^n.\) (This submatrix can be written in terms of \(\mathbf{Q}\) and \(\mathbf{R}\), but the expression is complicated and is not needed at this time.) The form of \(\mathbf{P}^n\) shows that the entries of \(\mathbf{Q}^n\) give the probabilities for being in each of the transient states after \(n\) steps for each possible transient starting state. For our first theorem we prove that the probability of being in the transient states after \(n\) steps approaches zero. Thus every entry of \(\mathbf{ Q}^n\) must approach zero as \(n\) approaches infinity (i.e, \(\mathbf{Q}^n \to \mathbf{ 0}\)).

Probability of Absorption

In an absorbing Markov chain, the probability that the process will be absorbed is 1 (i.e., \(\mathbf{Q}^n \to \mathbf{0}\) as \(n \to \infty\)).

- Proof

-

From each nonabsorbing state \(s_j\) it is possible to reach an absorbing state. Let \(m_j\) be the minimum number of steps required to reach an absorbing state, starting from \(s_j\). Let \(p_j\) be the probability that, starting from \(s_j\), the process will not reach an absorbing state in \(m_j\) steps. Then \(p_j <1\). Let \(m\) be the largest of the \(m_j\) and let \(p\) be the largest of \(p_j\). The probability of not being absorbed in \(m\) steps is less than or equal to \(p\), in \(2m\) steps less than or equal to \(p^2\), etc. Since \(p<1\) these probabilities tend to 0. Since the probability of not being absorbed in \(n\) steps is monotone decreasing, these probabilities also tend to 0, hence \(\lim_{n \rightarrow \infty } \mathbf{Q}^n = 0.\)

The Fundamental Matrix

For an absorbing Markov chain the matrix I - Q has an inverse \(\mathbf{N}\) and \(\mathbf{N}=\mathbf{I}+\mathbf{Q}+\mathbf{Q}^2+\cdots\). The \(i j\)-entry \(n_{i j}\) of the matrix \(\mathbf{N}\) is the expected number of times the chain is in state \(s_j\), given that it starts in state \(s_i\). The initial state is counted if \(i=j\).

- Proof

-

Let \((\mathbf{I} - \mathbf{Q})\mathbf{x}~=~0;\) that is \(\mathbf{x}~=~\mathbf{Q}\mathbf{x}.\) Then, iterating this we see that \(\mathbf{x}~=~\mathbf{Q}^{n}\mathbf x.\) Since \(\mathbf{Q}^{n} \rightarrow \mathbf{0}\), we have \(\mathbf{Q}^n\mathbf{x} \rightarrow \mathbf{0}\), so \(\mathbf{x}~=~\mathbf{0}\). Thus \((\mathbf{I} - \mathbf{Q})^{-1}~=~\mathbf{N}\) exists. Note next that

\[(\mathbf{I} - \mathbf{Q}) (\mathbf{I} + \mathbf{Q} + \mathbf{Q}^2 + \cdots + \mathbf{Q}^n) = \mathbf{I} - \mathbf{Q}^{n + 1}\ .\]

Thus multiplying both sides by \(\mathbf{N}\) gives \[\mathbf{I} + \mathbf{Q} + \mathbf{Q}^2 + \cdots + \mathbf{Q}^n = \mathbf{N} (\mathbf{I} - \mathbf{Q}^{n + 1})\ .\]

Letting \(n\) tend to infinity we have

\[\mathbf{N} = \mathbf{I} + \mathbf{Q} + \mathbf{Q}^2 + \cdots\ .\]

Let \(s_i\) and \(s_j\) be two transient states, and assume throughout the remainder of the proof that \(i\) and \(j\) are fixed. Let \(X^{(k)}\) be a random variable which equals 1 if the chain is in state \(s_j\) after \(k\) steps, and equals 0 otherwise. For each \(k\), this random variable depends upon both \(i\) and \(j\); we choose not to explicitly show this dependence in the interest of clarity. We have

\[P(X^{(k)} = 1) = q_{ij}^{(k)}\ ,\] and \[P(X^{(k)} = 0) = 1 - q_{ij}^{(k)}\ ,\]

where \(q_{ij}^{(k)}\) is the \(ij\)th entry of \(\mathbf{Q}^k\). These equations hold for \(k = 0\) since \(\mathbf{Q}^0 = \mathbf{I}\). Therefore, since \(X^{(k)}\) is a 0-1 random variable, \(E(X^{(k)}) = q_{ij}^{(k)}\).

The expected number of times the chain is in state \(s_j\) in the first \(n\) steps, given that it starts in state \(s_i\), is clearly

\[E\Bigl(X^{(0)} + X^{(1)} + \cdots + X^{(n)} \Bigr) = q_{ij}^{(0)} + q_{ij}^{(1)} + \cdots + q_{ij}^{(n)}\ .\]

Letting \(n\) tend to infinity we have

\[E\Bigl(X^{(0)} + X^{(1)} + \cdots \Bigr) = q_{ij}^{(0)} + q_{ij}^{(1)} + \cdots = n_{ij} \ .\]

For an absorbing Markov chain \(\mathbf{P}\), the matrix \(\mathbf{N} = (\mathbf{I} - \mathbf{Q})^{-1}\) is called the fundamental matrix for \(\mathbf{P}\). The entry \(n_{ij}\) of \(\mathbf{N}\) gives the expected number of times that the process is in the transient state \(s_j\) if it is started in the transient state \(s_i\).

(Example continued) In the Drunkard’s Walk example, the transition matrix in canonical form is

\[P= \begin{matrix} & \begin{matrix}0&&1&&2&&3&&4\end{matrix} \\\begin{matrix}0\\1\\2\\3\\4\end{matrix} & \left(\begin{array}{ccc|cc}

0 & 1 / 2 & 0 & 1 / 2 & 0 \\

1 / 2 & 0 & 1 / 2 & 0 & 0 \\

0 & 1 / 2 & 0 & 0 & 1 / 2 \\

\hline 0 & 0 & 0 & 1 & 0 \\

0 & 0 & 0 & 0 & 1

\end{array}\right)\\\end{matrix}\]

From this we see that the matrix \(\mathbf{Q}\) is

\[ \mathbf{Q}=\left(\begin{array}{ccc}

0 & 1 / 2 & 0 \\

1 / 2 & 0 & 1 / 2 \\

0 & 1 / 2 & 0

\end{array}\right) \]

and

\[\mathbf{I} - \mathbf{Q} = \pmatrix{ 1 & -1/2 & 0 \cr -1/2 & 1 & -1/2 \cr 0 & -1/2 & 1 \cr}\ .\]

Computing \((\mathbf{I} - \mathbf{Q})^{-1}\), we find

\[\mathbf{N}=(\mathbf{I}-\mathbf{Q})^{-1}=\begin{array}{c}

1 \\

2 \\

3

\end{array}\left(\begin{array}{ccc}

3 / 2 & 1 & 1 / 2 \\

1 & 2 & 1 \\

1 / 2 & 1 & 3 / 2

\end{array}\right)\]

From the middle row of \(\mathbf{N}\), we see that if we start in state 2, then the expected number of times in states 1, 2, and 3 before being absorbed are 1, 2, and 1.

Time to Absorption

We now consider the question: Given that the chain starts in state \(s_i\), what is the expected number of steps before the chain is absorbed? The answer is given in the next theorem.

Let \(t_i\) be the expected number of steps before the chain is absorbed, given that the chain starts in state \(s_i\), and let \(\mathbf{t}\) be the column vector whose \(i\)th entry is \(t_i\). Then

\[\mathbf{t} = \mathbf{N}\mathbf{c}\ ,\]

where \(\mathbf{c}\) is a column vector all of whose entries are 1.

- Proof

-

If we add all the entries in the \(i\)th row of \(\mathbf{N}\), we will have the expected number of times in any of the transient states for a given starting state \(s_i\), that is, the expected time required before being absorbed. Thus, \(t_i\) is the sum of the entries in the \(i\)th row of \(\mathbf{N}\). If we write this statement in matrix form, we obtain the theorem.

Absorption Probabilities

Let \(b_{ij}\) be the probability that an absorbing chain will be absorbed in the absorbing state \(s_j\) if it starts in the transient state \(s_i\). Let \(\mathbf{B}\) be the matrix with entries \(b_{ij}\). Then \(\mathbf{B}\) is an \(t\)-by-\(r\) matrix, and

\[\mathbf{B} = \mathbf{N} \mathbf{R}\ ,\]

where \(\mathbf{N}\) is the fundamental matrix and \(\mathbf{R}\) is as in the canonical form.

- Proof

-

We have \[\begin{aligned} \mathbf{B}_{ij} &= \sum_n\sum_k q_{ik}^{(n)} r_{kj} \\ &= \sum_k \sum_n q_{ik}^{(n)} r_{kj} \\ &= \sum_k n_{ik}r_{kj} \\ &= (\mathbf{N}\mathbf{R})_{ij}\ .\end{aligned}\] This completes the proof.

Another proof of this is given in Exercise \(\PageIndex{34}\).

(Example \(\PageIndex{2}\) continued) In the Drunkard’s Walk example, we found that

\[N= \begin{matrix} & \begin{matrix}1&&2&&3\end{matrix} \\\begin{matrix}1\\2\\3\end{matrix} & \begin{pmatrix}3/2 & 1 & 1/2 \\

1 & 2 & 1 \\

1/2 & 1 & 3/2 \\

\end{pmatrix}\\\end{matrix}\]

Hence,

\[ \begin{aligned} \mathbf{t} = \mathbf{N}\mathbf{c} &= \pmatrix{ 3/2 & 1 & 1/2 \cr 1 & 2 & 1 \cr 1/2 & 1 & 3/2 \cr } \pmatrix{ 1 \cr 1 \cr 1 \cr } \\ &= \pmatrix{ 3 \cr 4 \cr 3 \cr }\ .\end{aligned} \]

Thus, starting in states 1, 2, and 3, the expected times to absorption are 3, 4, and 3, respectively.

From the canonical form,

\[\mathbf{R}= \begin{matrix} & \begin{matrix}0&&4\end{matrix} \\\begin{matrix}1\\2\\3\end{matrix} & \begin{pmatrix}1/2 & 0 \\

0 & 0 \\

0 & 1/2 \\

\end{pmatrix}\\\end{matrix}\]

Hence,

\[ \mathbf{B}=\mathbf{N R}=\left(\begin{array}{ccc}

3 / 2 & 1 & 1 / 2 \\

1 & 2 & 1 \\

1 / 2 & 1 & 3 / 2

\end{array}\right) \cdot\left(\begin{array}{cc}

1 / 2 & 0 \\

0 & 0 \\

0 & 1 / 2

\end{array}\right) \]

\[\mathbf{R}= \begin{matrix} & \begin{matrix}0&&4\end{matrix} \\\begin{matrix}1\\2\\3\end{matrix} & \begin{pmatrix}3/4 & 1/4 \\

1/2 & 1/2 \\

1/4 & 3/4 \\

\end{pmatrix}\\\end{matrix}\]

Here the first row tells us that, starting from state \(1\), there is probability 3/4 of absorption in state \(0\) and 1/4 of absorption in state \(4\).

Computation

The fact that we have been able to obtain these three descriptive quantities in matrix form makes it very easy to write a computer program that determines these quantities for a given absorbing chain matrix.

The program AbsorbingChain calculates the basic descriptive quantities of an absorbing Markov chain.

We have run the program AbsorbingChain for the example of the drunkard’s walk (Example [exam 11.2.1]) with 5 blocks. The results are as follows:

\[\mathbf{Q}=\begin{aligned}

& \\

& 1 \\

& 2 \\

& 3 \\

& 4

\end{aligned}\left(\begin{array}{cccc}

1 & 2 & 3 & 4 \\

.00 & .50 & .00 & .00 \\

.50 & .00 & .50 & .00 \\

.00 & .50 & .00 & .50 \\

.00 & .00 & .50 & .00

\end{array}\right) ;\]

\[ \mathbf{R}= \begin{matrix} & \begin{matrix}0&&5\end{matrix} \\\begin{matrix}1\\2\\3\\4 \end{matrix} & \begin{pmatrix}

.50 & .00 \\

.00 & .00 \\

.00 & .00 \\

.00 & .50 \\

\end{pmatrix}\\\end{matrix}\]

\[\mathbf{N}= \begin{matrix} & \begin{matrix}1&&2&&3&&4\end{matrix} \\\begin{matrix}1\\2\\3\\4\end{matrix} & \begin{pmatrix}

1.60 & 1.20 & .80 & .40 \\

1.20 & 2.40 & 1.60 & .80 \\

.80 & 1.60 & 2.40 & 1.20 \\

.40 & .80 & 1.20 & 1.60

\end{pmatrix}\\\end{matrix}\]

\[ \mathbf{t}= \begin{matrix} & \\\begin{matrix}1\\2\\3\\4 \end{matrix} & \begin{pmatrix}

4.00 \\

6.00 \\

6.00 \\

4.00\\

\end{pmatrix}\\\end{matrix}\]

\[ \mathbf{B}= \begin{matrix} & \begin{matrix}0&&5\end{matrix} \\\begin{matrix}1\\2\\3\\4 \end{matrix} & \begin{pmatrix}

.80 & .20 \\

.60 & .40 \\

.40 & .60 \\

.20 & .80 \\

\end{pmatrix}\\\end{matrix}\]

Note that the probability of reaching the bar before reaching home, starting at \(x\), is \(x/5\) (i.e., proportional to the distance of home from the starting point). (See Exercise \(\PageIndex{23}\)

Exercises

Exercise \(\PageIndex{1}\)

In Example 11.1.4, for what values of \(a\) and \(b\) do we obtain an absorbing Markov chain?

Exercise \(\PageIndex{2}\)

Show that Example 11.1.7 is an absorbing Markov chain.

Exercise \(\PageIndex{3}\)

Which of the genetics examples (Examples 11.1.9, 11.1.10, and 11.1.11) are absorbing?

Exercise \(\PageIndex{4}\)

Find the fundamental matrix \(\mathbf{N}\) for Example 11.1.10.

Exercise \(\PageIndex{5}\)

For Example 11.1.11, verify that the following matrix is the inverse of \(\mathbf{I} - \mathbf{Q}\) and hence is the fundamental matrix \(\mathbf{N}\). \[\mathbf{N} = \pmatrix{ 8/3 & 1/6 & 4/3 & 2/3 \cr 4/3 & 4/3 & 8/3 & 4/3 \cr 4/3 & 1/3 & 8/3 & 4/3 \cr 2/3 & 1/6 & 4/3 & 8/3 \cr}\ .\] Find \(\mathbf{N} \mathbf{c}\) and \(\mathbf{N} \mathbf{R}\). Interpret the results.

Exercise \(\PageIndex{6}\)

In the Land of Oz example (Example 11.1.1), change the transition matrix by making R an absorbing state. This gives

\[\mathbf{N}= \begin{matrix} & \begin{matrix}R&&N&&S\end{matrix} \\\begin{matrix}R\\N\\S\end{matrix} & \begin{pmatrix}

1 & 0 & 0 \\

1/2 & 0 & 1/2 \\

1/4&1/4&1/2

\end{pmatrix}\\\end{matrix}\]

Find the fundamental matrix \(\mathbf{N}\), and also \(\mathbf{Nc}\) and \(\mathbf{NR}\). Interpret the results.

Exercise \(\PageIndex{7}\)

In Example 11.1.8, make states 0 and 4 into absorbing states. Find the fundamental matrix \(\mathbf{N}\), and also \(\mathbf{Nc}\) and \(\mathbf{NR}\), for the resulting absorbing chain. Interpret the results.

Exercise \(\PageIndex{8}\)

In Example \(\PageIndex{1}\) (Drunkard’s Walk) of this section, assume that the probability of a step to the right is 2/3, and a step to the left is 1/3. Find \(\mathbf{N},~\mathbf{N}\mathbf{c}\), and \(\mathbf{N}\mathbf{R}\). Compare these with the results of Example \(\PageIndex{3}\).

Exercise \(\PageIndex{9}\)

A process moves on the integers 1, 2, 3, 4, and 5. It starts at 1 and, on each successive step, moves to an integer greater than its present position, moving with equal probability to each of the remaining larger integers. State five is an absorbing state. Find the expected number of steps to reach state five.

Exercise \(\PageIndex{10}\)

Using the result of Exercise \(\PageIndex{9}\), make a conjecture for the form of the fundamental matrix if the process moves as in that exercise, except that it now moves on the integers from 1 to \(n\). Test your conjecture for several different values of \(n\). Can you conjecture an estimate for the expected number of steps to reach state \(n\), for large \(n\)? (See Exercise \(\PageIndex{11}\) for a method of determining this expected number of steps.)

Exercise \(\PageIndex{11}\)

Let \(b_k\) denote the expected number of steps to reach \(n\) from \(n-k\), in the process described in Exercise \(\PageIndex{9}\).

- Define \(b_0 = 0\). Show that for \(k > 0\), we have \[b_k = 1 + \frac 1k \bigl(b_{k-1} + b_{k-2} + \cdots + b_0\bigr)\ .\]

- Let \[f(x) = b_0 + b_1 x + b_2 x^2 + \cdots\ .\] Using the recursion in part (a), show that \(f(x)\) satisfies the differential equation \[(1-x)^2 y' - (1-x) y - 1 = 0\ .\]

- Show that the general solution of the differential equation in part (b) is \[y = \frac{-\log(1-x)}{1-x} + \frac c{1-x}\ ,\] where \(c\) is a constant.

Use part (c) to show that \[b_k = 1 + \frac 12 + \frac 13 + \cdots + \frac 1k\ .\]

Exercise \(\PageIndex{12}\)

Three tanks fight a three-way duel. Tank A has probability 1/2 of destroying the tank at which it fires, tank B has probability 1/3 of destroying the tank at which it fires, and tank C has probability 1/6 of destroying the tank at which it fires. The tanks fire together and each tank fires at the strongest opponent not yet destroyed. Form a Markov chain by taking as states the subsets of the set of tanks. Find \(\mathbf{N},~\mathbf{N}\mathbf{c}\), and \(\mathbf{N}\mathbf{R}\), and interpret your results. : Take as states ABC, AC, BC, A, B, C, and none, indicating the tanks that could survive starting in state ABC. You can omit AB because this state cannot be reached from ABC.

Exercise \(\PageIndex{13}\)

Smith is in jail and has 3 dollars; he can get out on bail if he has 8 dollars. A guard agrees to make a series of bets with him. If Smith bets \(A\) dollars, he wins \(A\) dollars with probability .4 and loses \(A\) dollars with probability .6. Find the probability that he wins 8 dollars before losing all of his money if

- he bets 1 dollar each time (timid strategy).

- he bets, each time, as much as possible but not more than necessary to bring his fortune up to 8 dollars (bold strategy).

- Which strategy gives Smith the better chance of getting out of jail?

Exercise \(\PageIndex{14}\)

With the situation in Exercise \(\PageIndex{13}\), consider the strategy such that for \(i < 4\), Smith bets \(\min(i,4 - i)\), and for \(i \geq 4\), he bets according to the bold strategy, where \(i\) is his current fortune. Find the probability that he gets out of jail using this strategy. How does this probability compare with that obtained for the bold strategy?

Exercise \(\PageIndex{15}\)

Consider the game of tennis when is reached. If a player wins the next point, he has On the following point, he either wins the game or the game returns to Assume that for any point, player A has probability .6 of winning the point and player B has probability .4 of winning the point.

- Set this up as a Markov chain with state 1: A wins; 2: B wins; 3: advantage A; 4: deuce; 5: advantage B.

- Find the absorption probabilities.

- At deuce, find the expected duration of the game and the probability that B will win.

Exercises \(\PageIndex{16}\) and \(\PageIndex{17}\) concern the inheritance of color-blindness, which is a sex-linked characteristic. There is a pair of genes, g and G, of which the former tends to produce color-blindness, the latter normal vision. The G gene is dominant. But a man has only one gene, and if this is g, he is color-blind. A man inherits one of his mother’s two genes, while a woman inherits one gene from each parent. Thus a man may be of type G or g, while a woman may be type GG or Gg or gg. We will study a process of inbreeding similar to that of Example [exam 11.1.9] by constructing a Markov chain.

Exercise \(\PageIndex{16}\)

List the states of the chain. : There are six. Compute the transition probabilities. Find the fundamental matrix \(\mathbf{N}\), \(\mathbf{N}\mathbf{c}\), and \(\mathbf{N}\mathbf{R}\).

Exercise \(\PageIndex{17}\)

Show that in both Example 11.1.11 and the example just given, the probability of absorption in a state having genes of a particular type is equal to the proportion of genes of that type in the starting state. Show that this can be explained by the fact that a game in which your fortune is the number of genes of a particular type in the state of the Markov chain is a fair game.5

Exercise \(\PageIndex{18}\)

Assume that a student going to a certain four-year medical school in northern New England has, each year, a probability \(q\) of flunking out, a probability \(r\) of having to repeat the year, and a probability \(p\) of moving on to the next year (in the fourth year, moving on means graduating).

- Form a transition matrix for this process taking as states F, 1, 2, 3, 4, and G where F stands for flunking out and G for graduating, and the other states represent the year of study.

- For the case \(q = .1\), \(r = .2\), and \(p = .7\) find the time a beginning student can expect to be in the second year. How long should this student expect to be in medical school?

Find the probability that this beginning student will graduate.

Exercise \(\PageIndex{19}\)

(E. Brown6) Mary and John are playing the following game: They have a three-card deck marked with the numbers 1, 2, and 3 and a spinner with the numbers 1, 2, and 3 on it. The game begins by dealing the cards out so that the dealer gets one card and the other person gets two. A move in the game consists of a spin of the spinner. The person having the card with the number that comes up on the spinner hands that card to the other person. The game ends when someone has all the cards.

- Set up the transition matrix for this absorbing Markov chain, where the states correspond to the number of cards that Mary has.

- Find the fundamental matrix.

- On the average, how many moves will the game last?

If Mary deals, what is the probability that John will win the game?

Exercise \(\PageIndex{20}\)

Assume that an experiment has \(m\) equally probable outcomes. Show that the expected number of independent trials before the first occurrence of \(k\) consecutive occurrences of one of these outcomes is \((m^k - 1)/(m - 1)\). Hint: Form an absorbing Markov chain with states 1, 2, …, \(k\) with state \(i\) representing the length of the current run. The expected time until a run of \(k\) is 1 more than the expected time until absorption for the chain started in state 1. It has been found that, in the decimal expansion of pi, starting with the 24,658,601st digit, there is a run of nine 7’s. What would your result say about the expected number of digits necessary to find such a run if the digits are produced randomly?

Exercise \(\PageIndex{21}\)

Roberts \(^7\) ) A city is divided into 3 areas 1, 2, and 3. It is estimated that amounts \(u_1, u_2\), and \(u_3\) of pollution are emitted each day from these three areas. A fraction \(q_{i j}\) of the pollution from region \(i\) ends up the next day at region \(j\). A fraction \(q_i=1-\sum_j q_{i j}>0\) goes into the atmosphere and escapes. Let \(w_i^{(n)}\) be the amount of pollution in area \(i\) after \(n\) days.

- Show that \(\mathbf{w}^{(n)}=\mathbf{u}+\mathbf{u} \mathbf{Q}+\cdots+\mathbf{u} \mathbf{Q}^{n-1}\).

- Show that \(\mathbf{w}^{(n)} \rightarrow \mathbf{w}\), and show how to compute \(\mathbf{w}\) from \(\mathbf{u}\).

- The government wants to limit pollution levels to a prescribed level by prescribing w. Show how to determine the levels of pollution \(\mathbf{u}\) which would result in a prescribed limiting value \(\mathbf{w}\).

Exercise \(\PageIndex{22}\)

In the Leontief economic model,8 there are \(n\) industries 1, 2, …, \(n\). The \(i\)th industry requires an amount \(0 \leq q_{ij} \leq 1\) of goods (in dollar value) from company \(j\) to produce 1 dollar’s worth of goods. The outside demand on the industries, in dollar value, is given by the vector \(\mathbf{d} = (d_1,d_2,\ldots,d_n)\). Let \(\mathbf{Q}\) be the matrix with entries \(q_{ij}\).

- Show that if the industries produce total amounts given by the vector \(\mathbf{x} = (x_1,x_2,\ldots,x_n)\) then the amounts of goods of each type that the industries will need just to meet their internal demands is given by the vector \(\mathbf{x} \mathbf{Q}\).

- Show that in order to meet the outside demand \(\mathbf{d}\) and the internal demands the industries must produce total amounts given by a vector \(\mathbf{x} = (x_1,x_2,\ldots,x_n)\) which satisfies the equation \(\mathbf{x} = \mathbf{x} \mathbf{Q} + \mathbf{d}\).

- Show that if \(\mathbf{Q}\) is the \(\mathbf{Q}\)-matrix for an absorbing Markov chain, then it is possible to meet any outside demand \(\mathbf{d}\).

- Assume that the row sums of \(\mathbf{Q}\) are less than or equal to 1. Give an economic interpretation of this condition. Form a Markov chain by taking the states to be the industries and the transition probabilites to be the \(q_{ij}\). Add one absorbing state 0. Define \[q_{i0} = 1 - \sum_j q_{ij}\ .\] Show that this chain will be absorbing if every company is either making a profit or ultimately depends upon a profit-making company.

- Define \(\mathbf{x} \mathbf{c}\) to be the gross national product. Find an expression for the gross national product in terms of the demand vector \(\mathbf{d}\) and the vector \(\mathbf{t}\) giving the expected time to absorption.

Exercise \(\PageIndex{23}\)

A gambler plays a game in which on each play he wins one dollar with probability \(p\) and loses one dollar with probability \(q = 1 - p\). The is the problem of finding the probability \(w_x\) of winning an amount \(T\) before losing everything, starting with state \(x\). Show that this problem may be considered to be an absorbing Markov chain with states 0, 1, 2, …, \(T\) with 0 and \(T\) absorbing states. Suppose that a gambler has probability \(p = .48\) of winning on each play. Suppose, in addition, that the gambler starts with 50 dollars and that \(T = 100\) dollars. Simulate this game 100 times and see how often the gambler is ruined. This estimates \(w_{50}\).

Exercise \(\PageIndex{24}\)

Show that \(w_x\) of Exercise [exer 11.2.22] satisfies the following conditions:

- \(w_x = pw_{x + 1} + qw_{x - 1}\) for \(x = 1\), 2, …, \(T - 1\).

- \(w_0 = 0\).

- \(w_T = 1\).

Show that these conditions determine \(w_x\). Show that, if \(p = q = 1/2\), then \[w_x = \frac xT\] satisfies (a), (b), and (c) and hence is the solution. If \(p \ne q\), show that \[w_x = \frac{(q/p)^x - 1}{(q/p)^T - 1}\] satisfies these conditions and hence gives the probability of the gambler winning.

Exercise \(\PageIndex{25}\)

Write a program to compute the probability \(w_x\) of Exercise [exer 11.2.23] for given values of \(x\), \(p\), and \(T\). Study the probability that the gambler will ruin the bank in a game that is only slightly unfavorable, say \(p = .49\), if the bank has significantly more money than the gambler.

Exercise \(\PageIndex{26}\)

We considered the two examples of the Drunkard’s Walk corresponding to the cases \(n = 4\) and \(n = 5\) blocks (see Example \(\PageIndex{1}\)). Verify that in these two examples the expected time to absorption, starting at \(x\), is equal to \(x(n - x)\). See if you can prove that this is true in general. : Show that if \(f(x)\) is the expected time to absorption then \(f(0) = f(n) = 0\) and \[f(x) = (1/2)f(x - 1) + (1/2)f(x + 1) + 1\] for \(0 < x < n\). Show that if \(f_1(x)\) and \(f_2(x)\) are two solutions, then their difference \(g(x)\) is a solution of the equation \[g(x) = (1/2)g(x - 1) + (1/2)g(x + 1)\ .\] Also, \(g(0) = g(n) = 0\). Show that it is not possible for \(g(x)\) to have a strict maximum or a strict minimum at the point \(i\), where \(1 \le i \le n-1\). Use this to show that \(g(i) = 0\) for all i. This shows that there is at most one solution. Then verify that the function \(f(x) = x(n-x)\) is a solution.

Exercise \(\PageIndex{27}\)

Consider an absorbing Markov chain with state space \(S\). Let \(f\) be a function defined on \(S\) with the property that \[f(i) = \sum_{j \in S} p_{ij} f(j)\ ,\] or in vector form \[\mathbf{f} = \mathbf{Pf}\ .\] Then \(f\) is called a for \(\mathbf{P}\). If you imagine a game in which your fortune is \(f(i)\) when you are in state \(i\), then the harmonic condition means that the game is in the sense that your expected fortune after one step is the same as it was before the step.

- Show that for \(f\) harmonic \[\mathbf{f} = \mathbf{P}^n{\mathbf{f}}\] for all \(n\).

- Show, using (a), that for \(f\) harmonic \[\mathbf{P}^{\infty}=\lim _{n \rightarrow \infty} \mathbf{P}^n=\left(\begin{array}{c|c}

\mathbf{0} & \mathbf{B} \\

\hline \mathbf{0} & \mathbf{I}

\end{array}\right)\] - Using (b), prove that when you start in a transient state \(i\) your expected final fortune \[\sum_k b_{ik} f(k)\] is equal to your starting fortune \(f(i)\). In other words, a fair game on a finite state space remains fair to the end. (Fair games in general are called Fair games on infinite state spaces need not remain fair with an unlimited number of plays allowed. For example, consider the game of Heads or Tails (see Example [exam 1.3]). Let Peter start with 1 penny and play until he has 2. Then Peter will be sure to end up 1 penny ahead.)

Exercise \(\PageIndex{28}\)

A coin is tossed repeatedly. We are interested in finding the expected number of tosses until a particular pattern, say \(B = HTH\), occurs for the first time. If, for example, the outcomes of the tosses are \(HHTTHTH\) we say that the pattern \(B\) has occurred for the first time after 7 tosses. Let \(T^B\) be the time to obtain pattern \(B\) for the first time. Li9 gives the following method for determining \(E(T^B)\).

We are in a casino and, before each toss of the coin, a gambler enters, pays 1 dollar to play, and bets that the pattern \(B = HTH\) will occur on the next three tosses. If \(H\) occurs, he wins 2 dollars and bets this amount that the next outcome will be \(T\). If he wins, he wins 4 dollars and bets this amount that \(H\) will come up next time. If he wins, he wins 8 dollars and the pattern has occurred. If at any time he loses, he leaves with no winnings.

Let \(A\) and \(B\) be two patterns. Let \(AB\) be the amount the gamblers win who arrive while the pattern \(A\) occurs and bet that \(B\) will occur. For example, if \(A = HT\) and \(B = HTH\) then \(AB\) = 2 + 4 = 6 since the first gambler bet on H and won 2 dollars and then bet on \(T\) and won 4 dollars more. The second gambler bet on \(H\) and lost. If \(A = HH\) and \(B = HTH\), then \(AB\) = 2 since the first gambler bet on \(H\) and won but then bet on \(T\) and lost and the second gambler bet on \(H\) and won. If \(A = B = HTH\) then \(AB = BB\) = 8 + 2 = 10.

Now for each gambler coming in, the casino takes in 1 dollar. Thus the casino takes in \(T^B\) dollars. How much does it pay out? The only gamblers who go off with any money are those who arrive during the time the pattern B occurs and they win the amount BB. But since all the bets made are perfectly fair bets, it seems quite intuitive that the expected amount the casino takes in should equal the expected amount that it pays out. That is, \(E(T^B) = BB\).

Since we have seen that for \(B = HTH, BB\) = 10, the expected time to reach the pattern \(HTH\) for the first time is 10. If we had been trying to get the pattern \(B = HHH\), then \(BB\) = 8 + 4 + 2 = 14 since all the last three gamblers are paid off in this case. Thus the expected time to get the pattern \(HHH\) is 14. To justify this argument, Li used a theorem from the theory of martingales (fair games).

We can obtain these expectations by considering a Markov chain whose states are the possible initial segments of the sequence \(HTH\); these states are \(HTH, HT, H\), and \(\emptyset\), where \(\emptyset\) is the empty set. Then, for this example, the transition matrix is

\[\mathbf{N}= \begin{matrix} & \begin{matrix}HTH&HT&H&0\end{matrix} \\\begin{matrix}HTH\\HT\\H\\0\end{matrix} & \begin{pmatrix}

1 & 0 & 0 & 0 \\

.5 & 0 & 0 & .5 \\

0 & .5 & .5 & 0 \\

0 & 0 & .5 & .5

\end{pmatrix}\\\end{matrix}\]

Show, using the associated Markov chain, that the values \(E(T^B)\) = 10 and \(E(T^B)\) = 14 are correct for the expected time to reach the patterns \(HTH\) and \(HHH\), respectively.

Exercise \(\PageIndex{29}\)

We can use the gambling interpretation given in Exercise \(\PageIndex{28}\) to find the expected number of tosses required to reach pattern B when we start with pattern A. To be a meaningful problem, we assume that pattern A does not have pattern B as a subpattern. Let \(E_A(T^B)\) be the expected time to reach pattern B starting with pattern A. We use our gambling scheme and assume that the first k coin tosses produced the pattern A. During this time, the gamblers made an amount AB. The total amount the gamblers will have made when the pattern B occurs is BB. Thus, the amount that the gamblers made after the pattern A has occurred is BB - AB. Again by the fair game argument, \(E_A(T^B)\) = BB-AB.

For example, suppose that we start with pattern A = HT and are trying to get the pattern B = HTH. Then we saw in Exercise [exer 11.2.26] that AB = 4 and BB = 10 so \(E_A(T ^B)\) = BB-AB= 6.

Verify that this gambling interpretation leads to the correct answer for all starting states in the examples that you worked in Exercise [exer 11.2.26].

Exercise \(\PageIndex{30}\)

Here is an elegant method due to Guibas and Odlyzko10 to obtain the expected time to reach a pattern, say HTH, for the first time. Let \(f(n)\) be the number of sequences of length \(n\) which do not have the pattern HTH. Let \(f_p(n)\) be the number of sequences that have the pattern for the first time after \(n\) tosses. To each element of \(f(n)\), add the pattern HTH. Then divide the resulting sequences into three subsets: the set where HTH occurs for the first time at time \(n + 1\) (for this, the original sequence must have ended with HT); the set where HTH occurs for the first time at time \(n + 2\) (cannot happen for this pattern); and the set where the sequence HTH occurs for the first time at time \(n + 3\) (the original sequence ended with anything except HT). Doing this, we have \[f(n) = f_p(n + 1) + f_p(n + 3)\ .\] Thus, \[\frac{f(n)}{2^n} = \frac{2f_p(n + 1)}{2^{n + 1}} + \frac{2^3f_p(n + 3)}{2^{n + 3}}\ .\] If \(T\) is the time that the pattern occurs for the first time, this equality states that \[P(T > n) = 2P(T = n + 1) + 8P(T = n + 3)\ .\] Show that if you sum this equality over all \(n\) you obtain \[\sum_{n = 0}^\infty P(T > n) = 2 + 8 = 10\ .\] Show that for any integer-valued random variable \[E(T) = \sum_{n = 0}^\infty P(T > n)\ ,\] and conclude that \(E(T) = 10\). Note that this method of proof makes very clear that \(E(T)\) is, in general, equal to the expected amount the casino pays out and avoids the martingale system theorem used by Li.

Exercise \(\PageIndex{31}\)

In Example [exam 11.1.9], define \(f(i)\) to be the proportion of G genes in state \(i\). Show that \(f\) is a harmonic function (see Exercise [exer 11.2.29]). Why does this show that the probability of being absorbed in state \((\mbox{GG},\mbox{GG})\) is equal to the proportion of G genes in the starting state? (See Exercise \(\PageIndex{17}\).)

Exercise \(\PageIndex{32}\)

Show that the stepping stone model (Example 11.1.12) is an absorbing Markov chain. Assume that you are playing a game with red and green squares, in which your fortune at any time is equal to the proportion of red squares at that time. Give an argument to show that this is a fair game in the sense that your expected winning after each step is just what it was before this step.: Show that for every possible outcome in which your fortune will decrease by one there is another outcome of exactly the same probability where it will increase by one.

Use this fact and the results of Exercise \(\PageIndex{27}\) to show that the probability that a particular color wins out is equal to the proportion of squares that are initially of this color.

Exercise \(\PageIndex{33}\)

Consider a random walker who moves on the integers 0, 1, …, \(N\), moving one step to the right with probability \(p\) and one step to the left with probability \(q = 1 - p\). If the walker ever reaches 0 or \(N\) he stays there. (This is the Gambler’s Ruin problem of Exercise \(\PageIndex{23}\).) If \(p = q\) show that the function

\[f(i) = i\]

is a harmonic function (see Exercise \(\PageIndex{27}\)), and if \(p \ne q\) then

\[f(i) = \biggl(\frac {q}{p}\biggr)^i\]

is a harmonic function. Use this and the result of Exercise \(\PageIndex{27}\) to show that the probability \(b_{iN}\) of being absorbed in state \(N\) starting in state \(i\) is

\[ b_{i N}=\left\{\begin{array}{cl}

\frac{i}{N}, & \text { if } p=q \\

\frac{\left(\frac{q}{p}\right)^i-1}{\left(\frac{q}{p}\right)^N-1}, & \text { if } p \neq q

\end{array}\right. \]

For an alternative derivation of these results see Exercise \(\PageIndex{24}\).

Exercise \(\PageIndex{34}\)

Complete the following alternate proof of Theorem [thm 11.2.3]. Let \(s_i\) be a transient state and \(s_j\) be an absorbing state. If we compute \(b_{ij}\) in terms of the possibilities on the outcome of the first step, then we have the equation \[b_{ij} = p_{ij} + \sum_k p_{ik} b_{kj}\ ,\] where the summation is carried out over all transient states \(s_k\). Write this in matrix form, and derive from this equation the statement \[\mathbf{B} = \mathbf{N}\mathbf{R}\ .\]

Exercise \(\PageIndex{35}\)

In Monte Carlo roulette (see Example [exam 6.1.5]), under option (c), there are six states (\(S\), \(W\), \(L\), \(E\), \(P_1\), and \(P_2\)). The reader is referred to Figure [fig 6.1.5], which contains a tree for this option. Form a Markov chain for this option, and use the program AbsorbingChain to find the probabilities that you win, lose, or break even for a 1 franc bet on red. Using these probabilities, find the expected winnings for this bet. For a more general discussion of Markov chains applied to roulette, see the article of H. Sagan referred to in Example [exam 6.7].

Exercise \(\PageIndex{36}\)

We consider next a game called by its inventor W. Penney.11 There are two players; the first player picks a pattern A of H’s and T’s, and then the second player, knowing the choice of the first player, picks a different pattern B. We assume that neither pattern is a subpattern of the other pattern. A coin is tossed a sequence of times, and the player whose pattern comes up first is the winner. To analyze the game, we need to find the probability \(p_A\) that pattern A will occur before pattern B and the probability \(p_B = 1- p_A\) that pattern B occurs before pattern A. To determine these probabilities we use the results of Exercises [exer 11.2.26] and [exer 11.2.27]. Here you were asked to show that, the expected time to reach a pattern B for the first time is, \[E(T^B) = BB\ ,\] and, starting with pattern A, the expected time to reach pattern B is \[E_A(T^B) = BB - AB\ .\]

- and thus \[BB = E(T^{A\ {\rm or}\ B}) + p_A (BB - AB)\ .\] Interchange A and B to find a similar equation involving the \(p_B\). Finally, note that \[p_A + p_B = 1\ .\] Use these equations to solve for \(p_A\) and \(p_B\).

- Assume that both players choose a pattern of the same length k. Show that, if \(k = 2\), this is a fair game, but, if \(k = 3\), the second player has an advantage no matter what choice the first player makes. (It has been shown that, for \(k \geq 3\), if the first player chooses \(a_1\), \(a_2\), …, \(a_k\), then the optimal strategy for the second player is of the form \(b\), \(a_1\), …, \(a_{k - 1}\) where \(b\) is the better of the two choices H or T.13)