5.2: Joint Distributions of Continuous Random Variables


Having considered the discrete case, we now look at joint distributions for continuous random variables.

Definition \(\PageIndex{1}\)

If continuous random variables \(X\) and \(Y\) are defined on the same sample space \(S\), then their joint probability density function (joint pdf) is a piecewise continuous function, denoted \(f(x,y)\), that satisfies the following.

- \(f(x,y)\geq0\), for all \((x,y)\in\mathbb{R}^2\)

- \(\displaystyle{\iint\limits_{\mathbb{R}^2}\! f(x,y)\, dx\, dy = 1}\)

- \(\displaystyle{P((X,Y)\in A) = \iint\limits_A\! f(x,y)\, dx\, dy}\), for any \(A\subseteq\mathbb{R}^2\)

The first two conditions in Definition 5.2.1 provide the requirements for a function to be a valid joint pdf. The third condition indicates how to use a joint pdf to calculate probabilities. As an example of applying the third condition in Definition 5.2.1, the joint cdf for continuous random variables \(X\) and \(Y\) is obtained by integrating the joint density function over a set \(A\) of the form

$$A = \{(x,y)\in\mathbb{R}^2\ |\ x\leq a\ \text{and}\ y\leq b\},\notag$$

where \(a\) and \(b\) are constants. Specifically, if \(A\) is given as above, then the joint cdf of \(X\) and \(Y\), at the point \((a,b)\), is given by

$$F(a,b) = P(X\leq a\ \text{and}\ Y\leq b) = \int\limits^b_{-\infty}\int\limits^a_{-\infty}\! f(x,y)\, dx\, dy.\notag$$

Note that probabilities for continuous jointly distributed random variables are now volumes instead of areas as in the case of a single continuous random variable.

As in the discrete case, we can also obtain the individual, marginal pdf's of \(X\) and \(Y\) from the joint pdf.

Definition \(\PageIndex{2}\)

Suppose that continuous random variables \(X\) and \(Y\) have joint density function \(f(x,y)\). The marginal pdf's of \(X\) and \(Y\) are respectively given by the following.

\begin{align*}

f_X(x) &= \int\limits^{\infty}_{-\infty}\! f(x, y)\,dy \quad(\text{fix a value of}\ X,\ \text{and integrate over all possible values of}\ Y) \\

f_Y(y) &= \int\limits^{\infty}_{-\infty}\! f(x, y)\,dx \quad(\text{fix a value of}\ Y,\ \text{and integrate over all possible values of}\ X)

\end{align*}

Example \(\PageIndex{1}\)

Suppose a radioactive particle is contained in a unit square. We can define random variables \(X\) and \(Y\) to denote the \(x\)- and \(y\)-coordinates of the particle's location in the unit square, with the bottom left corner placed at the origin. We assume that the particle moves completely at random, so that its location is uniformly distributed over the unit square. This implies that the joint density function of \(X\) and \(Y\) is constant over the unit square, which we can write as

$$f(x,y) = \left\{\begin{array}{l l}

c, & \text{if}\ 0\leq x\leq 1\ \text{and}\ 0\leq y\leq 1 \\

0, & \text{otherwise},

\end{array}\right.\notag$$

where \(c\) is some unknown constant. We can find the value of \(c\) by using the second condition in Definition 5.2.1 and solving the following:

$$\iint\limits_{\mathbb{R}^2}\! f(x,y)\, dx\, dy = 1 \quad\Rightarrow\quad \int\limits^1_0\!\int\limits^1_0\! c\, dx\, dy = 1 \quad\Rightarrow\quad c \int\limits^1_0\!\int\limits^1_0\! 1\, dx\, dy = 1 \quad\Rightarrow\quad c=1\notag$$

We can now use the joint pdf of \(X\) and \(Y\) to compute probabilities that the particle is in some specific region of the unit square. For example, consider the region

$$A = \{(x,y)\ |\ x-y > 0.5\},\notag$$

which is graphed in Figure 1 below.

To find the probability that the particle lies in the part of \(A\) that falls inside the unit square (the lower right corner of the square), we integrate the joint density function over that portion of \(A\), which gives the following probability:

$$P(X-Y>0.5) = \iint\limits_A\! f(x,y)\, dx\, dy = \int^{0.5}_0\! \int^{1}_{y+0.5}\! 1\, dx\, dy = 0.125\notag$$
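As a rough numerical check of this value, the double integral can be approximated with a midpoint Riemann sum. The sketch below is only illustrative: the helper name `midpoint_double_integral` and the grid size `n` are assumptions introduced here, not part of the example.

```python
def midpoint_double_integral(f, x_lo, x_hi, y_lo, y_hi, n=400):
    """Approximate the double integral of f over a rectangle with an n-by-n midpoint rule."""
    hx = (x_hi - x_lo) / n
    hy = (y_hi - y_lo) / n
    total = 0.0
    for i in range(n):
        x = x_lo + (i + 0.5) * hx
        for j in range(n):
            y = y_lo + (j + 0.5) * hy
            total += f(x, y)
    return total * hx * hy


def uniform_pdf(x, y):
    """Joint pdf from Example 5.2.1: 1 on the unit square, 0 elsewhere."""
    return 1.0 if 0 <= x <= 1 and 0 <= y <= 1 else 0.0


def integrand(x, y):
    # Keep the density only on the region A, where x - y > 0.5.
    return uniform_pdf(x, y) if x - y > 0.5 else 0.0


prob = midpoint_double_integral(integrand, 0.0, 1.0, 0.0, 1.0)
print(prob)  # should be close to the exact value 0.125
```

Because the region has a diagonal edge, the grid approximation is only accurate to within about one grid width; increasing `n` tightens the agreement with 0.125.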

Lastly, we apply Definition 5.2.2 to find the marginal pdf's of \(X\) and \(Y\).

\begin{align*}

f_X(x) &= \int\limits^1_0\! 1\, dy = 1, \quad\text{for}\ 0\leq x\leq 1 \\

f_Y(y) &= \int\limits^1_0\! 1\, dx = 1, \quad\text{for}\ 0\leq y\leq 1

\end{align*}

Note that both \(X\) and \(Y\) are individually uniform random variables, each over the interval \([0,1]\). This should not be too surprising. Given that the particle's location was uniformly distributed over the unit square, we should expect that the individual coordinates would also be uniformly distributed over the unit intervals.

Example \(\PageIndex{2}\)

At a particular gas station, gasoline is stocked in a bulk tank each week. Let random variable \(X\) denote the proportion of the tank's capacity that is stocked in a given week, and let \(Y\) denote the proportion of the tank's capacity that is sold in the same week. Note that the gas station cannot sell more than what was stocked in a given week, which implies that the value of \(Y\) cannot exceed the value of \(X\). A possible joint pdf of \(X\) and \(Y\) is given by

$$f(x,y) = \left\{\begin{array}{l l}

3x, & \text{if}\ 0\leq y \leq x\leq 1 \\

0, & \text{otherwise.}

\end{array}\right.\notag$$

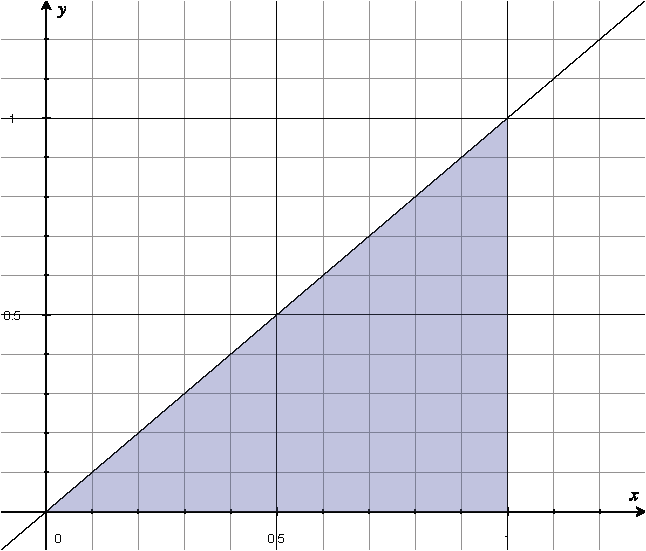

Note that this function is only nonzero over the triangular region given by \(\{(x,y)\ |\ 0\leq y\leq x \leq 1\}\), which is graphed in Figure 2 below:

Figure 2: Region over which joint pdf \(f(x,y)\) is nonzero.


We find the joint cdf of \(X\) and \(Y\) at the point \((x,y) = (1/2, 1/3)\):

\begin{align*}

F\left(\frac{1}{2},\frac{1}{3}\right) = P\left(X\leq\frac{1}{2} \text{ and } Y\leq\frac{1}{3}\right) &= \int^{1/3}_0\int^{0.5}_y\! 3x\, dxdy\\

&=\int^{1/3}_0\! \left(\frac{3}{2}x^2\Big|^{0.5}_y\right)\,dy = \int^{1/3}_0 \!\left(\frac{3}{8} - \frac{3}{2}y^2\right)\,dy\\

&=\left(\frac{3}{8}y-\frac{1}{2}y^3\right)\Big|^{1/3}_0 = \frac{23}{216} \approx 0.1065

\end{align*}

Thus, there is a 10.65% chance that at most half the tank is stocked and at most a third of the tank is sold in a given week. Note that in finding the above integral, we look at where the region given by \(\{(x,y)\ |\ x\leq1/2, y\leq1/3\}\) intersects the region over which the joint pdf is nonzero, i.e., the region graphed in Figure 2. This intersection determines the limits of integration in the double integral. Figure 3 below, made on desmos.com, is a graph of the intersection:

Figure 3: Intersection of \(\{(x,y)\ |\ x\leq1/2, y\leq1/3\}\) with the region over which joint pdf \(f(x,y)\) is nonzero.
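The arithmetic in the last step can be double-checked with exact fractions. The sketch below evaluates the antiderivative from the calculation above; the function name `joint_cdf` is an assumption introduced here, and the formula it uses is valid only when \(0\leq b\leq a\leq 1\), which is the case for the point \((1/2, 1/3)\).

```python
from fractions import Fraction


def joint_cdf(a, b):
    """F(a, b) for Example 5.2.2, assuming 0 <= b <= a <= 1.

    The inner integral of 3x dx from x = y to x = a is (3/2)(a^2 - y^2).
    Integrating that in y from 0 to b gives (3/2) a^2 b - (1/2) b^3.
    """
    a, b = Fraction(a), Fraction(b)
    return Fraction(3, 2) * a**2 * b - Fraction(1, 2) * b**3


value = joint_cdf(Fraction(1, 2), Fraction(1, 3))
print(value)         # 23/216
print(float(value))  # roughly 0.1065
```

As a sanity check, `joint_cdf(1, 1)` returns 1, the total probability.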

Next, we find the probability that the amount of gas sold is less than half the amount that is stocked in a given week. In other words, we find \(P(Y < 0.5X)\). In order to find this probability, we need to find the region over which we will integrate the joint pdf. To do this, look for the intersection of the region given by \(\{(x,y)\ |\ y < 0.5x\}\) with the region in Figure 2, which is graphed in Figure 4 below:

Figure 4: Intersection of \(\{(x,y)\ |\ y < 0.5x\}\) with the region over which joint pdf \(f(x,y)\) is nonzero.

The calculation is as follows:

\begin{align*}

P(Y<0.5X) &= \int^1_0\int^{0.5x}_0\! 3x\, dydx\\

&= \int^1_0 \!\left(3xy\Big|^{0.5x}_0\right) \,dx\\

&= \int^1_0 \!\left(\frac{3}{2}x^2-0\right) \,dx = \frac{1}{2}x^3\Big|^1_0\\

&=\frac{1}{2}

\end{align*}

Thus, there is a 50% chance that the amount of gas sold in a given week is less than half of the gas stocked.
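Both the validity of this joint pdf and the probability just computed can be checked numerically. The following is a minimal sketch using a midpoint Riemann sum over the unit square; the helper name `integrate_over_unit_square` and the grid size `n` are assumptions made for illustration.

```python
def joint_pdf(x, y):
    """Joint pdf from Example 5.2.2: 3x on the triangle 0 <= y <= x <= 1, else 0."""
    return 3.0 * x if 0 <= y <= x <= 1 else 0.0


def integrate_over_unit_square(g, n=400):
    """Midpoint-rule approximation of the double integral of g over [0,1] x [0,1]."""
    h = 1.0 / n
    mids = [(k + 0.5) * h for k in range(n)]
    return h * h * sum(g(x, y) for x in mids for y in mids)


# Second condition of Definition 5.2.1: the pdf should integrate to 1.
total = integrate_over_unit_square(joint_pdf)

# P(Y < 0.5 X): integrate the pdf only where y < 0.5 x.
prob = integrate_over_unit_square(
    lambda x, y: joint_pdf(x, y) if y < 0.5 * x else 0.0
)

print(total, prob)  # should be close to 1 and 0.5, respectively
```

The sums are approximations, so small deviations from 1 and 0.5 are expected and shrink as `n` grows.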

Expectations of Functions of Jointly Distributed Continuous Random Variables

As we did in the discrete case of jointly distributed random variables, we can also look at the expected value of jointly distributed continuous random variables. Again we focus on the expected value of functions applied to the pair \((X, Y)\), since expected value is defined for a single quantity. At this point, it should not surprise you that the following theorem is similar to Theorem 5.1.1, the result in the discrete setting, except the sums have been replaced by integrals.

Theorem \(\PageIndex{1}\)

Suppose that \(X\) and \(Y\) are jointly distributed continuous random variables with joint pdf \(f(x,y)\).

If \(g(X,Y)\) is a function of these two random variables, then its expected value is given by the following:

$$\text{E}[g(X,Y)] = \iint\limits_{\mathbb{R}^2}\!g(x,y)f(x,y)\,dxdy\notag$$

Example 5.2.3 below applies Theorem 5.2.1.
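As a quick illustration (an added sketch, not part of the original examples), take the joint pdf from Example 5.2.2 and let \(g(X,Y) = Y\). Theorem 5.2.1 then gives the expected proportion of the tank's capacity that is sold in a week, where the limits of integration come from the triangular region \(0\leq y\leq x\leq 1\) on which the pdf is nonzero:

\begin{align*}
\text{E}[Y] &= \iint\limits_{\mathbb{R}^2}\! y\, f(x,y)\, dy\, dx = \int^1_0\int^x_0\! y\cdot 3x\, dy\, dx\\
&= \int^1_0\! \left(\frac{3}{2}xy^2\Big|^{x}_0\right)\, dx = \int^1_0\! \frac{3}{2}x^3\, dx\\
&= \frac{3}{8}x^4\Big|^1_0 = \frac{3}{8}
\end{align*}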

Independent Random Variables

We can also define independent random variables in the continuous case, just as we did for discrete random variables.

Definition \(\PageIndex{3}\)

Continuous random variables \(X_1, X_2, \ldots, X_n\) are independent if the joint pdf factors into a product of the marginal pdf's:

$$f(x_1, x_2, \ldots, x_n) = f_{X_1}(x_1)\cdot f_{X_2}(x_2) \cdots f_{X_n}(x_n).\notag$$

It is equivalent to check that this condition holds for the cumulative distribution functions.
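To see what it looks like when this condition fails, consider the joint pdf from Example 5.2.2. The sketch below approximates the marginal pdf's numerically using Definition 5.2.2 and compares their product with the joint pdf at one point. The helper names and the test point \((0.5, 0.25)\) are assumptions chosen for illustration; since both sides are continuous near that point, a mismatch there suggests that the joint pdf does not factor, so \(X\) and \(Y\) in that example are not independent.

```python
def joint_pdf(x, y):
    """Joint pdf from Example 5.2.2: 3x on the triangle 0 <= y <= x <= 1, else 0."""
    return 3.0 * x if 0 <= y <= x <= 1 else 0.0


def integrate_1d(g, lo, hi, n=1000):
    """Midpoint-rule approximation of the integral of g from lo to hi."""
    h = (hi - lo) / n
    return h * sum(g(lo + (k + 0.5) * h) for k in range(n))


def marginal_x(x):
    # Fix x and integrate over y; the pdf vanishes outside [0, 1].
    return integrate_1d(lambda y: joint_pdf(x, y), 0.0, 1.0)


def marginal_y(y):
    # Fix y and integrate over x; the pdf vanishes outside [0, 1].
    return integrate_1d(lambda x: joint_pdf(x, y), 0.0, 1.0)


x0, y0 = 0.5, 0.25
joint = joint_pdf(x0, y0)                   # 3 * 0.5 = 1.5
product = marginal_x(x0) * marginal_y(y0)   # approximately 0.75 * 1.40625
print(joint, product)
```

The two printed values differ noticeably, which is consistent with the fact that the amount sold is constrained by the amount stocked.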

Example \(\PageIndex{3}\)

Consider the continuous random variables defined in Example 5.2.1, where \(X\) and \(Y\) give the location of a radioactive particle. We will show that \(X\) and \(Y\) are independent and then verify that Theorem 5.1.2 also applies in the continuous setting.

Recall that we found the marginal pdf's to be the following:

\begin{align*}

f_X(x) &= 1,\ \text{for}\ 0\leq x\leq1 \\

f_Y(y) &= 1,\ \text{for}\ 0\leq y\leq 1

\end{align*}

So, for \((x,y)\) in the unit square, i.e., \(0\leq x\leq1\) and \(0\leq y\leq 1\), we have

$$f(x,y) = 1 = 1\cdot1 =f_X(x)f_Y(y),\notag$$

and outside the unit square, at least one of the marginal pdf's will be \(0\), so

$$f(x,y) = 0 = f_X(x)f_Y(y).\notag$$

We have thus shown that \(f(x,y)=f_X(x)\ f_Y(y)\), for all \((x,y)\in \mathbb{R}^2\), and so by Definition 5.2.3, \(X\) and \(Y\) are independent.

Now let's look at the expected value of the product of \(X\) and \(Y\). To compute this we apply Theorem 5.2.1:

$$\text{E}[XY] = \iint_{\mathbb{R}^2} \!xy\cdot f(x,y)\, dxdy = \int^1_0\int^1_0 \!xy\cdot1\, dxdy = \int^1_0 \!\left(\frac{x^2}{2}y\Big|^1_0\right)\, dy = \int^1_0 \!\frac{y}{2}\, dy = \frac{1}{4}\notag$$

Note that both \(X\) and \(Y\) are uniform on the interval \([0,1]\). Therefore, their expected values are both 1/2, the midpoint of \([0,1]\). Putting this all together, we have

$$\text{E}[XY] = \frac{1}{4} = \frac{1}{2}\cdot\frac{1}{2} = \text{E}[X]\ \text{E}[Y],\notag$$

which is the conclusion of Theorem 5.1.2.
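The same identity can be checked numerically by approximating each expected value with Theorem 5.2.1. This is a minimal sketch under the assumption that the pdf is supported on the unit square; the function name `expectation` and the grid size `n` are introduced here for illustration.

```python
def uniform_pdf(x, y):
    """Joint pdf from Example 5.2.1: 1 on the unit square, 0 elsewhere."""
    return 1.0 if 0 <= x <= 1 and 0 <= y <= 1 else 0.0


def expectation(g, pdf, n=200):
    """Midpoint-rule approximation of E[g(X, Y)] for a pdf supported on the unit square."""
    h = 1.0 / n
    mids = [(k + 0.5) * h for k in range(n)]
    return h * h * sum(g(x, y) * pdf(x, y) for x in mids for y in mids)


e_xy = expectation(lambda x, y: x * y, uniform_pdf)
e_x = expectation(lambda x, y: x, uniform_pdf)
e_y = expectation(lambda x, y: y, uniform_pdf)

print(e_xy, e_x * e_y)  # both should be very close to 0.25
```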