8.2.1: Inference for a Single Proportion

- Last updated

- Save as PDF

- Page ID

- 28760

According to a New York Times / CBS News poll in June 2012, only about 44% of the American public approves of the job the Supreme Court is doing.1 This poll included responses of 976 adults.

Identifying when the Sample Proportion is Nearly Normal

A sample proportion can be described as a sample mean. If we represent each "success" as a 1 and each "failure" as a 0, then the sample proportion is the mean of these numerical outcomes:

\[ \hat {p} = \dfrac {0 + 1 + 1 + \dots + 0}{976} = 0.44\]

The distribution of \(\hat {p}\) is nearly normal when the distribution of 0's and 1's is not too strongly skewed for the sample size. The most common guideline for sample size and skew when working with proportions is to ensure that we expect to observe a minimum number of successes and failures, typically at least 10 of each.

1nytimes.com/2012/06/08/us/politics/44-percent-of-americans-approve-of-supreme-court-in-new-poll.html

Conditions for the sampling distribution of \(\hat {p}\) being nearly normal

The sampling distribution for \(\hat {p}\), taken from a sample of size n from a population with a true proportion p, is nearly normal when

- the sample observations are independent and

- we expected to see at least 10 successes and 10 failures in our sample, i.e. \(np \ge 10\) and \(n(1 - p) \ge 10\). This is called the success-failure condition.

If these conditions are met, then the sampling distribution of \(\hat {p}\) is nearly normal with mean p and standard error

\[SE_{\hat {p}} = \sqrt {\dfrac {p(1 - p)}{n}} \label{6.1}\]

Typically we do not know the true proportion, \(p\), so must substitute some value to check conditions and to estimate the standard error. For confidence intervals, usually \(\hat {p}\) is used to check the success-failure condition and compute the standard error. For hypothesis tests, typically the null value - that is, the proportion claimed in the null hypothesis - is used in place of p. Examples are presented for each of these cases in Sections 6.1.2 and 6.1.3.

TIP: Reminder on checking independence of observations

If data come from a simple random sample and consist of less than 10% of the population, then the independence assumption is reasonable. Alternatively, if the data come from a random process, we must evaluate the independence condition more carefully.

Confidence Intervals for a Proportion

We may want a confidence interval for the proportion of Americans who approve of the job the Supreme Court is doing. Our point estimate, based on a sample of size n = 976 from the NYTimes/CBS poll, is \(\hat {p} = 0.44\). To use the general confidence interval formula from Section 4.5, we must check the conditions to ensure that the sampling distribution of \(\hat {p}\) is nearly normal. We also must determine the standard error of the estimate.

The data are based on a simple random sample and consist of far fewer than 10% of the U.S. population, so independence is confirmed. The sample size must also be sufficiently large, which is checked via the success-failure condition: there were approximately \(976 \times \hat {p} = 429\) "successes" and \(976 \times (1- \hat {p}) = 547\) "failures" in the sample, both easily greater than 10.

With the conditions met, we are assured that the sampling distribution of \(\hat {p}\) is nearly normal. Next, a standard error for \(\hat {p}\) is needed, and then we can employ the usual method to construct a confidence interval.

Exercise \(\PageIndex{1}\)

Estimate the standard error of \(\hat {p} = 0.44\) using Equation \ref{6.1}. Because \(p\) is unknown and the standard error is for a confidence interval, use \(\hat {p}\) in place of \(p\).

- Answer

-

\[SE = \sqrt {\dfrac {p(1- p)}{n}} \approx \sqrt {\dfrac {0.44(1-0.44)}{976}} = 0.016 \nonumber\]

Example \(\PageIndex{1}\)

Construct a 95% confidence interval for \(p\), the proportion of Americans who trust federal officials most of the time.

Solution

Using the standard error estimate from Exercise \(\PageIndex{1}\), the point estimate 0.44, and z* = 1:96 for a 95% confidence interval, the confidence interval may be computed as

\[ \text {point estimate} \pm z^*SE \rightarrow 0.44 \pm 1.96 \times 0.016 \rightarrow (0.409, 0.471)\]

We are 95% confident that the true proportion of Americans who approve of the job of the Supreme Court (in June 2012) is between 0.409 and 0.471. If the proportion has not changed since this poll, than we can say with high confidence that the job approval of the Supreme Court is below 50%.

Constructing a confidence interval for a proportion

- Verify the observations are independent and also verify the success-failure condition using \(\hat {p}\) and n.

- If the conditions are met, the sampling distribution of \(\hat {p}\) may be well-approximated by the normal model.

- Construct the standard error using \(\hat {p}\) in place of p and apply the general confidence interval formula.

Hypothesis Testing for a Proportion

To apply the normal distribution framework in the context of a hypothesis test for a proportion, the independence and success-failure conditions must be satisfied. In a hypothesis test, the success-failure condition is checked using the null proportion: we verify \(np_0\) and \(n(1 - p_0)\) are at least 10, where \(p_0\) is the null value.

Exercise \(\PageIndex{2}\)

Deborah Toohey is running for Congress, and her campaign manager claims she has more than 50% support from the district's electorate. Set up a onesided hypothesis test to evaluate this claim.

- Answer

-

Is there convincing evidence that the campaign manager is correct?

- H0 : p = 0.50,

- HA : p > 0.50.

Example \(\PageIndex{2}\)

A newspaper collects a simple random sample of 500 likely voters in the district and estimates Toohey's support to be 52%. Does this provide convincing evidence for the claim of Toohey's manager at the 5% significance level?

Solution

Because this is a simple random sample that includes fewer than 10% of the population, the observations are independent. In a one-proportion hypothesis test, the success-failure condition is checked using the null proportion,

\[p_0 = 0.5: np_0 = n(1 - p_0) = 500 \times 0.5 = 250 > 10.\]

With these conditions verified, the normal model may be applied to \(\hat {p}\).

Next the standard error can be computed. The null value is used again here, because this is a hypothesis test for a single proportion.

\[ \begin{align*} SE &= \sqrt {\dfrac {p_0 \times (1 - p_0)}{n}} \\[5pt] &= \sqrt {\dfrac {0.5 (1 - 0.5)}{500}} = 0.022 \end{align*}\]

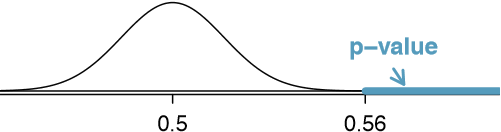

A picture of the normal model is shown in Figure \(\PageIndex{1}\) with the p-value represented by the shaded region. Based on the normal model, the test statistic can be computed as the Z score of the point estimate:

\[ \begin{align*} Z &= \dfrac {\text {point estimate - null value}}{SE} \\[5pt] &= \dfrac {0.52 - 0.50}{0.022} = 0.89 \end{align*}\]

The upper tail area, representing the p-value, is 0.1867. Because the p-value is larger than 0.05, we do not reject the null hypothesis, and we do not find convincing evidence to support the campaign manager's claim.

Hypothesis test for a proportion

Set up hypotheses and verify the conditions using the null value, \(p_0\), to ensure \(\hat {p}\) is nearly normal under H0. If the conditions hold, construct the standard error, again using p0, and show the p-value in a drawing. Lastly, compute the p-value and evaluate the hypotheses.

Choosing a sample size when estimating a proportion

We first encountered sample size computations in Section 4.6, which considered the case of estimating a single mean. We found that these computations were helpful in planning a study to control the size of the standard error of a point estimate. The task was to find a sample size n so that the sample mean would be within some margin of error m of the actual mean with a certain level of confidence. For example, the margin of error for a point estimate using 95% confidence can be written as \(1.96 \times SE\). We set up a general equation to represent the problem:

\[ME = z^*SE \le m\]

where ME represented the actual margin of error and \(z^*\) was chosen to correspond to the confidence level. The standard error formula is specified to correspond to the particular setting. For instance, in the case of means, the standard error was given as \(\dfrac {\sigma}{\sqrt {n}}\). In the case of a single proportion, we use \(\sqrt {p(1 - p)}{n}\) for the standard error.

Planning a sample size before collecting data is equally important when estimating a proportion. For instance, if we are conducting a university survey to determine whether students support a $200 per year increase in fees to pay for a new football stadium, how big of a sample is needed to be sure the margin of error is less than 0.04 using a 95% confidence level?

Example \(\PageIndex{3}\)

Find the smallest sample size n so that the margin of error of the point estimate \(\hat {p}\) will be no larger than \(m = 0.04\) when using a 95% confidence interval.

Solution

For a 95% confidence level, the value z* corresponds to 1.96, and we can write the margin of error expression as follows:

\[ME = z^*SE = 1.96 \times \sqrt {\dfrac {p(1 - p)}{n}} \le 0.04\]

There are two unknowns in the equation: p and n. If we have an estimate of p, perhaps from a similar survey, we could use that value. If we have no such estimate, we must use some other value for p. It turns out that the margin of error is largest when p is 0.5, so we typically use this worst case estimate if no other estimate is available:

\[1.96 \times \sqrt {\dfrac {0.5(1 - 0.5)}{n}} \le 0.04\]

\[1.96^2 \times \dfrac {0.5(1 - 0.5)}{n} \le 0.04^2\]

\[1.96^2 \times \dfrac {0.5(1 - 0.5)}{0.04^2} \le n\]

\[600.25 \le n\]

We would need at least 600.25 participants, which means we need 601 participants or more, to ensure the sample proportion is within 0.04 of the true proportion with 95% confidence.

No estimate of the true proportion is required in sample size computations for a proportion, whereas an estimate of the standard deviation is always needed when computing a sample size for a margin of error for the sample mean. However, if we have an estimate of the proportion, we should use it in place of the worst case estimate of the proportion, 0.5.

Example \(\PageIndex{4}\)

A manager is about to oversee the mass production of a new tire model in her factory, and she would like to estimate what proportion of these tires will be rejected through quality control. The quality control team has monitored the last three tire models produced by the factory, failing 1.7% of tires in the first model, 6.2% of the second model, and 1.3% of the third model. The manager would like to examine enough tires to estimate the failure rate of the new tire model to within about 2% with a 90% confidence level.

- There are three different failure rates to choose from. Perform the sample size computation for each separately, and identify three sample sizes to consider.

- The sample sizes in (b) vary widely. Which of the three would you suggest using? What would influence your choice?

Solution

(a) For the 1.7% estimate of p, we estimate the appropriate sample size as follows:

\[1.65 \times \sqrt {\dfrac {p(1 - p)}{n}} \approx 1.65 \times \sqrt {\dfrac {0.017(1 - 0.017)}{n}} \le 0.02 \rightarrow n \ge 113.7 \nonumber\]

Using the estimate from the first model, we would suggest examining 114 tires (round up!). A similar computation can be accomplished using 0.062 and 0.013 for p: 396 and 88.

(b) We could examine which of the old models is most like the new model, then choose the corresponding sample size. Or if two of the previous estimates are based on small samples while the other is based on a larger sample, we should consider the value corresponding to the larger sample. (Answers will vary.)

Exercise \(\PageIndex{4}\)

A recent estimate of Congress' approval rating was 17%.5 What sample size does this estimate suggest we should use for a margin of error of 0.04 with 95% confidence?

- Answer

-

We complete the same computations as before, except now we use 0.17 instead of 0.5 for p: \[1.96 \times \sqrt {\dfrac {p(1 - p)}{n}} \approx 1.96 \times \sqrt {\dfrac {0.17(1 - 0.17)}{n}} \le 0.04 \rightarrow n \ge 338.8 \nonumber \] A sample size of 339 or more would be reasonable.

Contributors

David M Diez (Google/YouTube), Christopher D Barr (Harvard School of Public Health), Mine Çetinkaya-Rundel (Duke University)